LPRNet (License Plate Recognition)

1. Introduction

LPRNet (License Plate Recognition via Deep Neural Networks) is a lightweight convolutional neural network that can perform end-to-end license plate recognition without character segmentation.

The advantages of LPRNet can be summarized in the following three points:

(1) LPRNet requires no character pre-segmentation, achieves high recognition accuracy with real-time performance, and supports license plates with a variable number of characters. It can be trained end to end on license plates from different countries whose characters differ widely.

(2) LPRNet is the first real-time lightweight OCR algorithm that does not use RNNs, and it can run on a variety of devices, including embedded devices.

(3) LPRNet is robust. It still recognizes plates well under difficult conditions such as skewed viewing angles, camera distortion, and poor lighting.
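Segmentation-free, variable-length recognition of this kind usually works by predicting one class per horizontal position and then collapsing the sequence; the original LPRNet paper trains the network with CTC loss for this purpose. The following is a minimal sketch of greedy CTC decoding, not code taken from lprnet_opencv.py or lprnet_bmcv.py. The function name `ctc_greedy_decode`, the toy alphabet, and the choice of the last class as the blank symbol are assumptions made for illustration.

```python
from typing import Sequence


def ctc_greedy_decode(scores: Sequence[Sequence[float]], alphabet: str) -> str:
    """Collapse per-position class scores into a string (greedy CTC decoding).

    scores[t][c] is the score of class c at position t. Classes
    0..len(alphabet)-1 map to characters; the last class is assumed
    to be the CTC blank (an assumption of this sketch).
    """
    blank = len(alphabet)
    # Pick the best-scoring class at every position.
    best = [max(range(len(step)), key=step.__getitem__) for step in scores]

    chars = []
    prev = blank
    for idx in best:
        # Keep a class only if it is not blank and not a repeat of the
        # immediately preceding position.
        if idx != blank and idx != prev:
            chars.append(alphabet[idx])
        prev = idx
    return "".join(chars)


if __name__ == "__main__":
    def one_hot(i: int, n: int = 4) -> list:
        return [1.0 if j == i else 0.0 for j in range(n)]

    # Toy alphabet "AB1" plus blank (class 3).
    frames = [one_hot(i) for i in [0, 0, 3, 0, 1, 1, 3, 2]]
    print(ctc_greedy_decode(frames, "AB1"))  # prints "AAB1"
```

Because repeated predictions are merged and blanks are dropped, the output length is not tied to the number of positions, which is what allows plates of different lengths to be read without segmenting characters first.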

Project Directory

LPRNET
│  libsophon_soc_0.5.1-LTS_aarch64.tar.gz  # Dependencies required for compilation
│  sophon-mw-soc_0.12.0_aarch64.tar.gz  # Dependencies required for compilation
│  stream_dev.tar  # Cross-compilation environment image
│
├─cpp  # Files required for the C++ examples
│  ├─dependencies
│  │  ├─include
│  │  │      bmnn_utils.h
│  │  │      bm_wrapper.hpp
│  │  │      ff_decode.hpp
│  │  │      json.hpp
│  │  │      utils.hpp
│  │  │
│  │  └─src
│  │          ff_decode.cpp
│  │
│  ├─lprnet_bmcv  # Files required for the bmcv example
│  │      CMakeLists.txt
│  │      lprnet.cpp
│  │      lprnet.hpp
│  │      main.cpp
│  │
│  ├─lprnet_opencv  # Files required for the opencv example
│  │      CMakeLists.txt
│  │      lprnet.cpp
│  │      lprnet.hpp
│  │      main.cpp
│
├─datasets  # Test dataset
│  └─test
│
├─docs  # Tutorial documentation
│  │  LPRNet.md
│  │
│  └─images
│
├─models  # Model weights
│  └─BM1684X
│          lprnet_fp16_1b.bmodel
│          lprnet_fp32_1b.bmodel
│          lprnet_int8_1b.bmodel
│          lprnet_int8_4b.bmodel
│
├─python  # Files required for the Python examples
│      lprnet_bmcv.py
│      lprnet_opencv.py
│
└─tools  # Tools that may be needed during development
        compare_statis.py  # Compare test results
        convert_imageset.py  # Convert dataset
        eval_ccpd.py  # Accuracy measurement
        export_onnx.py  # Export ONNX model
        LPRNet.py

2. Running Steps

1. Python Examples

1.1 Configure Python Environment

bmcv Environment (for running lprnet_bmcv.py)

Edit the .bashrc file to add Sophon's Python environment:

sudo vim ~/.bashrc

Add the following line at the end of the file:

export PYTHONPATH=$PYTHONPATH:/opt/sophon/libsophon-current/lib:/opt/sophon/sophon-opencv-latest/opencv-python/

Save and exit with :wq, then reload the configuration in the current terminal:

source ~/.bashrc

Run echo $PYTHONPATH to confirm that the output contains the paths added above.
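The echo $PYTHONPATH check can also be scripted. The sketch below only compares strings: it reports which of the two directories from the export line are absent from PYTHONPATH, and does not verify that the Sophon packages actually import. The helper name `missing_entries` is hypothetical.

```python
import os
import posixpath

# The two directories added to PYTHONPATH in the step above.
REQUIRED = (
    "/opt/sophon/libsophon-current/lib",
    "/opt/sophon/sophon-opencv-latest/opencv-python",
)


def missing_entries(pythonpath: str, required=REQUIRED) -> list:
    """Return the required directories that are absent from a PYTHONPATH string."""
    # normpath removes trailing slashes so ".../opencv-python/" still matches.
    present = {posixpath.normpath(p) for p in pythonpath.split(":") if p}
    return [r for r in required if posixpath.normpath(r) not in present]


if __name__ == "__main__":
    missing = missing_entries(os.environ.get("PYTHONPATH", ""))
    if missing:
        print("Missing from PYTHONPATH:", missing)
    else:
        print("PYTHONPATH contains both Sophon directories")
```

If the script reports missing entries in a new terminal, the export line was probably not saved to .bashrc or the file has not been reloaded.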

opencv Environment (for running lprnet_opencv.py)

pip install opencv-python-headless

1.2 Inference Testing

File Parameter Description

The command parameters for lprnet_opencv.py and lprnet_bmcv.py are the same. Taking lprnet_opencv.py inference as an example, the parameters are described as follows:

usage: lprnet_opencv.py [--input INPUT_PATH] [--bmodel BMODEL] [--dev_id DEV_ID]

--input: path to the test data; this may be the path of an entire image folder;

--bmodel: path to the bmodel used for inference; the stage 0 network is used by default;

--dev_id: ID of the TPU device used for inference.

Image Testing

The following is an image testing example; an entire image folder can be tested at once. Change into the script directory (for example, /data/LPRNet/python/), from which the required files can be run and debugged.

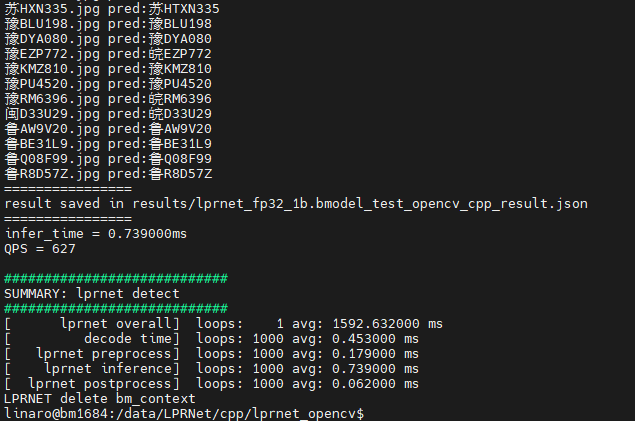

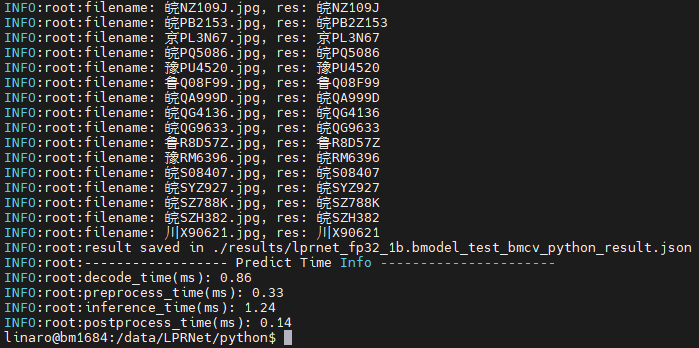

python3 lprnet_bmcv.py --input ../datasets/test --bmodel ../models/BM1684X/lprnet_fp32_1b.bmodel --dev_id 0

After execution, the predicted results are saved in results/lprnet_fp32_1b.bmodel_test_bmcv_python_result.json, and the predicted results, inference time, and other information are printed. The output is as follows:

2. C++ Examples

1.1 Cross-compilation Environment Setup

C++ programs must be compiled against their dependencies before they can run on the board. To reduce the load on the edge device, we cross-compile in an x86 Linux environment.

Two methods are provided for setting up the cross-compilation environment:

(1) Install cross-compilation toolchain via apt:

If your system and the target SoC platform have the same libc version (check with the ldd --version command), you can install the toolchain with the following command:

sudo apt-get install gcc-aarch64-linux-gnu g++-aarch64-linux-gnu

To uninstall:

sudo apt remove cpp-*-aarch64-linux-gnu

If your environment does not meet the above requirement, method (2) is recommended.
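The libc comparison required by method (1) can be done by hand with ldd --version on both machines. As a small sketch, the host side can also be read from Python with `platform.libc_ver()` and compared with the version string noted on the board. The helper names `parse_version` and `same_libc` are hypothetical, and the target version has to be supplied by you.

```python
import platform


def parse_version(text: str) -> tuple:
    """Turn a dotted version such as '2.31' into a tuple of ints."""
    return tuple(int(part) for part in text.strip().split("."))


def same_libc(host_version: str, target_version: str) -> bool:
    """True when host and target report exactly the same libc version."""
    return parse_version(host_version) == parse_version(target_version)


if __name__ == "__main__":
    # libc_ver() returns ('', '') when the version cannot be determined.
    lib, version = platform.libc_ver()
    print("host libc:", lib or "unknown", version or "unknown")
    # Compare with the value printed by `ldd --version` on the SoC, e.g.:
    #   same_libc(version, "<version reported on the board>")
```

If the two versions differ, the Docker-based method avoids the mismatch.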

(2) Set up cross-compilation environment via docker:

You can use the provided Docker image, stream_dev.tar, as the cross-compilation environment.

If using Docker for the first time, execute the following commands to install and configure (only required for first time):

sudo apt install docker.io

sudo systemctl start docker

sudo systemctl enable docker

sudo groupadd docker

sudo usermod -aG docker $USER

newgrp docker

Load the image from the directory that contains the downloaded image file:

docker load -i stream_dev.tar

You can list the loaded images with docker images; the default is stream_dev:latest.

Create container

docker run --privileged --name stream_dev -v $PWD:/workspace -it stream_dev:latest

# stream_dev is just an example name; please specify your own container name

The workspace directory in the container is mounted from the host directory in which you run docker run, and you can compile projects inside this container. The workspace directory is located under the root directory; changes made in it are reflected in the corresponding files of the host directory.

Note: Run docker run from the parent directory of soc-sdk (the dependency build environment) or from a directory above it, so that soc-sdk is included in the mounted directory.
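Because the container only sees the host directory through the /workspace mount, paths passed to the build inside the container (such as the -DSDK value used in the cross-compilation step) have to be container paths. The sketch below shows the translation implied by -v $PWD:/workspace. The function name `to_container_path` and the example host paths are hypothetical.

```python
from pathlib import PurePosixPath


def to_container_path(host_path: str, mount_source: str,
                      mount_target: str = "/workspace") -> str:
    """Translate a host path under mount_source to its path in the container.

    Raises ValueError when host_path is not inside mount_source, which is
    the situation the note above warns about: the directory would not be
    visible in the container.
    """
    relative = PurePosixPath(host_path).relative_to(PurePosixPath(mount_source))
    return str(PurePosixPath(mount_target) / relative)


if __name__ == "__main__":
    # Hypothetical layout: docker run was started in /home/user/LPRNet.
    print(to_container_path("/home/user/LPRNet/soc-sdk", "/home/user/LPRNet"))
    # prints "/workspace/soc-sdk"
```

A ValueError here means the container was started from a directory that does not contain soc-sdk, so it should be recreated from a higher-level directory.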

1.2 Package Dependency Files

Package libsophon

Extract libsophon_soc_x.y.z_aarch64.tar.gz, where x.y.z is the version number (substitute the actual version for ${x.y.z} in the commands below).

# Create root directory for dependency files

mkdir -p soc-sdk

# Extract libsophon_soc_x.y.z_aarch64.tar.gz

tar -zxf libsophon_soc_${x.y.z}_aarch64.tar.gz

# Copy related library directories and header file directories to the dependency root directory

cp -rf libsophon_soc_${x.y.z}_aarch64/opt/sophon/libsophon-${x.y.z}/lib soc-sdk

cp -rf libsophon_soc_${x.y.z}_aarch64/opt/sophon/libsophon-${x.y.z}/include soc-sdk

Package sophon-ffmpeg and sophon-opencv

Extract sophon-mw-soc_x.y.z_aarch64.tar.gz, where x.y.z is the version number (substitute the actual version for ${x.y.z} in the commands below).

# Extract sophon-mw-soc_x.y.z_aarch64.tar.gz

tar -zxf sophon-mw-soc_${x.y.z}_aarch64.tar.gz

# Copy ffmpeg and opencv library directories and header file directories to soc-sdk directory

cp -rf sophon-mw-soc_${x.y.z}_aarch64/opt/sophon/sophon-ffmpeg_${x.y.z}/lib soc-sdk

cp -rf sophon-mw-soc_${x.y.z}_aarch64/opt/sophon/sophon-ffmpeg_${x.y.z}/include soc-sdk

cp -rf sophon-mw-soc_${x.y.z}_aarch64/opt/sophon/sophon-opencv_${x.y.z}/lib soc-sdk

cp -rf sophon-mw-soc_${x.y.z}_aarch64/opt/sophon/sophon-opencv_${x.y.z}/include soc-sdk

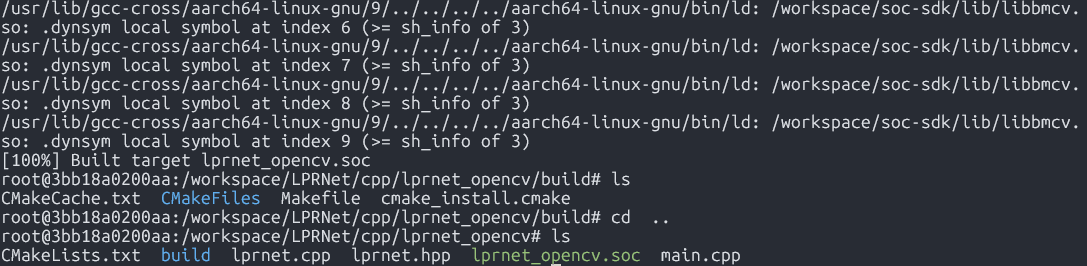
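The packaging steps copy the lib and include directories of libsophon, sophon-ffmpeg, and sophon-opencv into the same soc-sdk directory, so the three components end up merged. The sketch below reproduces that merge in Python as an illustration of the intended result. It is an alternative to the cp -rf commands, not part of the project, and the function name `merge_into_sdk` is hypothetical.

```python
import shutil
from pathlib import Path


def merge_into_sdk(component_root: Path, sdk_root: Path) -> None:
    """Copy lib/ and include/ of one extracted component into sdk_root.

    Existing directories are merged rather than replaced, which mirrors
    running `cp -rf <component>/lib soc-sdk` several times in a row.
    """
    for name in ("lib", "include"):
        source = component_root / name
        if source.is_dir():
            # dirs_exist_ok (Python 3.8+) allows copying into an existing tree.
            shutil.copytree(source, sdk_root / name, dirs_exist_ok=True)


if __name__ == "__main__":
    import sys

    # Usage: python3 merge_sdk.py <sdk_root> <component_root> [<component_root> ...]
    # where each component root is an extracted directory such as
    # .../opt/sophon/libsophon-<version> (paths supplied by the caller).
    if len(sys.argv) >= 3:
        for component in sys.argv[2:]:
            merge_into_sdk(Path(component), Path(sys.argv[1]))
```

After all components are merged, soc-sdk should contain exactly two top-level directories, lib and include.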

1.3 Perform Cross-compilation

After setting up the cross-compilation environment, use the cross-compilation toolchain to compile and generate executable files. lprnet_opencv and lprnet_bmcv have the same compilation method. Taking compiling the lprnet_opencv program as an example:

cd cpp/lprnet_opencv

mkdir build && cd build

# Set the -DSDK path to match your environment; use an absolute path.

cmake -DTARGET_ARCH=soc -DSDK=/workspace/soc-sdk/ ..

make

After compilation completes, a .soc file is generated in the corresponding directory, for example: cpp/lprnet_opencv/lprnet_opencv.soc.

2. Image Testing

You need to copy the executable files generated from cross-compilation and required models and test data to the SoC platform (i.e., BM1684X development board) for testing.

Parameter Description

Usage: lprnet_opencv.soc [params]

--bmodel (value:../../models/BM1684/lprnet_fp32_1b.bmodel)

bmodel file path

--dev_id (value:0)

TPU device id

--help (value:true)

print help information.

--input (value:../../datasets/test)

input path, images directory

Image Testing

The following is an image testing example; an entire image folder can be tested at once.

# First, make the file executable

chmod +x lprnet_opencv.soc

./lprnet_opencv.soc --input=../../datasets/test --bmodel=../../models/BM1684X/lprnet_fp32_1b.bmodel --dev_id=0

After execution, the predicted results are saved in results/lprnet_fp32_1b.bmodel_test_opencv_cpp_result.json, and the predicted results, inference time, and other information are printed. The output is as follows: