Deploying Llama3 Example

1. Compiling the Model

Refer to LLM-TPU-main Phase 1, compile and convert the bmodel file in the X86 environment and transfer it to the board.

You can also download from Resource Download.

Also download the official Sophgo TPU-demo.

Warning

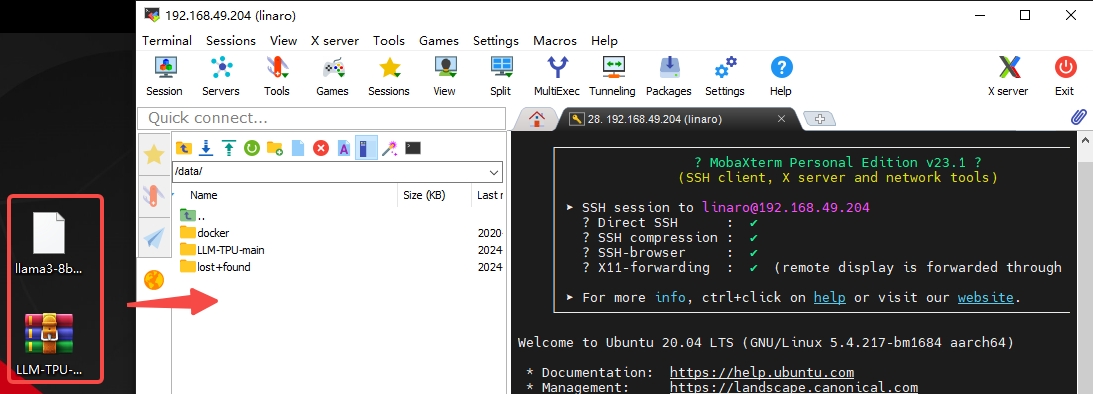

Transfer to the board's root directory /data path. You can use MobaXterm to log in via SSH and directly drag and drop using the built-in SFTP.

2. Compiling the Executable

Tips

Make sure the board's network can connect to the internet. The following steps are performed on the board.

- The system needs to install dependencies first. Use the following commands to install:

sudo apt-get update ##Update software sources

apt-get install pybind11-dev -y ##Install pybind11-dev

pip3 install transformers ##Install transformers via python (this step may take a while due to network issues)- The compilation steps are performed in the directory where the demo and bmodel were just transferred:

sudo -i ##Switch to root user

cd /data ##Enter /data directory

unzip LLM-TPU-main.zip ##Extract LLM-TPU-main.zip

mv llama3-8b_int4_1dev_1024.bmodel /data/LLM-TPU-main/models/Llama3/python_demo ##Move bmodel to corresponding demo directory

cd /data/LLM-TPU-main/models/Llama3/python_demo ##Enter Llama3 demo directory

mkdir build && cd build ##Create build directory and enter it

cmake .. ##Generate Makefile with cmake

make ##Compile

cp *chat* .. ##Copy compiled libraries to the run directory- Run:

cd /data/LLM-TPU-main/models/Llama3/python_demo ##Enter Llama3 demo directory

python3 pipeline.py --model_path ./llama3-8b_int4_1dev_1024.bmodel --tokenizer_path ../token_config/ --devid 0 ##Run demoRunning effect:

root@bm1684:/data/LLM-TPU-main/models/Llama3/python_demo# python3 pipeline.py --model_path ./llama3-8b_int4_1dev_1024.bmodel --tokenizer_path ../token_config/ --devid 0

None of PyTorch, TensorFlow >= 2.0, or Flax have been found. Models won't be available and only tokenizers, configuration and file/data utilities can be used.

Load ../token_config/ ...

Special tokens have been added in the vocabulary, make sure the associated word embeddings are fine-tuned or trained.

Device [ 0 ] loading ....

[BMRT][bmcpu_setup:498] INFO:cpu_lib 'libcpuop.so' is loaded.

[BMRT][bmcpu_setup:521] INFO:Not able to open libcustomcpuop.so

bmcpu init: skip cpu_user_defined

open usercpu.so, init user_cpu_init

[BMRT][BMProfileDeviceBase:190] INFO:gdma=0, tiu=0, mcu=0

Model[./llama3-8b_int4_1dev_1024.bmodel] loading ....

[BMRT][load_bmodel:1939] INFO:Loading bmodel from [./llama3-8b_int4_1dev_1024.bmodel]. Thanks for your patience...

[BMRT][load_bmodel:1704] INFO:Bmodel loaded, version 2.2+v1.8.beta.0-89-g32b7f39b8-20240620

[BMRT][load_bmodel:1706] INFO:pre net num: 0, load net num: 69

[BMRT][load_tpu_module:1802] INFO:loading firmare in bmodel

[BMRT][preload_funcs:2121] INFO: core_id=0, multi_fullnet_func_id=22

[BMRT][preload_funcs:2124] INFO: core_id=0, dynamic_fullnet_func_id=23

Done!

=================================================================

1. If you want to quit, please enter one of [q, quit, exit]

2. To create a new chat session, please enter one of [clear, new]

=================================================================

Question: hello

Answer: Hello! How can I help you?

FTL: 1.690 s

TPS: 7.194 token/s

Question: who are you?

Answer: I am Llama3, an AI assistant developed by IntellectNexus. How can I assist you?

FTL: 1.607 s

TPS: 7.213 token/s