PP-OCR (Optical Character Recognition)

1. Introduction

PP-OCR is a practical optical character recognition (OCR) toolkit open-sourced by Baidu PaddlePaddle. It aims to provide accurate, easy-to-use, and flexible text recognition. Building on Baidu's computer vision work, it supports text detection and recognition across multiple languages and scenarios, and is widely used for document digitization, license plate recognition, industrial quality control, and smart office applications. Its core features include a balance of accuracy and practicality tuned for real business scenarios; lightweight model design (for example, the mobile model PP-OCRv3-mobile) that trades off speed and deployment cost while preserving recognition accuracy; and support for Chinese, English, and other languages (Japanese, Korean, French, etc.) as well as special cases such as curved or blurred text.

Project Directory

PP-OCR
├─cpp
│  ├─dependencies            ##C++ example dependencies
│  │
│  └─ppocr_bmcv
│      │  CMakeLists.txt     ##Files required for cross-compilation
│      │  ppocr_bmcv.soc     ##Provided cross-compiled executable
│      │
│      ├─include             ##Cross-compilation dependencies
│      │      clipper.h
│      │      postprocess.hpp
│      │      ppocr_cls.hpp
│      │      ppocr_det.hpp
│      │      ppocr_rec.hpp
│      │
│      ├─src                 ##Cross-compilation source code
│      │      clipper.cpp
│      │      main.cpp
│      │      postprocess.cpp
│      │      ppocr_cls.cpp
│      │      ppocr_det.cpp
│      │      ppocr_rec.cpp
│      │
│      └─thirdparty          ##Cross-compilation third-party libraries
│              cnpy.cpp
│              cnpy.h
│
├─docs                       ##Help documentation
│  │  PP-OCR.md
│  │
│  └─images
├─python                     ##Python example required files
│      ppocr_cls_opencv.py
│      ppocr_det_opencv.py
│      ppocr_rec_opencv.py
│      ppocr_system_opencv.py
│      requirements.txt
│
├─scripts
│      download.sh           ##Script files for downloading datasets and models
│
└─tools                      ##Files for comparison and evaluation
        compare_statis.py
        eval_icdar.py

2. Running Steps

Before running the test example, you need to download the required datasets and models.

#Install download tool

pip3 install dfss --upgrade

#Execute download script

bash scripts/download.sh

1. Python Examples
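
Once the download script has finished, it can be worth confirming that the files used by the examples in this document are actually present. The following is a minimal sketch: `missing_files` is a hypothetical helper, not part of the project, and the paths are simply the ones that appear in the example commands here. The layout produced by `download.sh` may differ.

```python
from pathlib import Path

# Paths taken from the example commands in this document (an assumption
# about what download.sh fetches); adjust them to your setup.
EXPECTED = [
    "models/BM1684X/ch_PP-OCRv4_det_fp32.bmodel",
    "models/BM1684X/ch_PP-OCRv4_rec_fp32.bmodel",
    "datasets/ppocr_keys_v1.txt",
]


def missing_files(root, expected=EXPECTED):
    """Return the expected relative paths that do not exist under root."""
    root = Path(root)
    return [p for p in expected if not (root / p).exists()]
```

Calling `missing_files(".")` from the project root returns an empty list when every listed file is in place.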

1.1 Text Detection Inference Testing

Parameters for ppocr_det_opencv.py are as follows:

usage: ppocr_det_opencv.py [-h] [--dev_id DEV_ID] [--input INPUT] [--bmodel_det BMODEL_DET]

optional arguments:

-h, --help show this help message and exit

--dev_id DEV_ID tpu card id

--input INPUT input image directory path

--bmodel_det BMODEL_DET

bmodel path

Text detection testing example is as follows:

#The program will automatically select 1batch or 4batch based on the number of images in the folder, with priority to 4batch inference.

python3 python/ppocr_det_opencv.py --input datasets/cali_set_det --bmodel_det models/BM1684X/ch_PP-OCRv4_det_fp32.bmodel --dev_id 0

After execution, predicted images will be saved in the results/det_results folder.
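
The comment about choosing between 1-batch and 4-batch inference can be read as a simple splitting rule. The sketch below shows one plausible interpretation, in which as many images as possible go through batches of 4 and the remainder is processed one at a time. `plan_batches` is a hypothetical name, and the actual script may behave differently (for example, by padding the last batch).

```python
def plan_batches(num_images, large=4, small=1):
    """Split num_images into batch sizes, preferring the larger batch.

    Assumption: leftovers that do not fill a large batch are run one
    image at a time rather than padded.
    """
    full, rest = divmod(num_images, large)
    return [large] * full + [small] * rest
```

Under this reading, a folder with 10 images would be handled as two batches of 4 followed by two single-image runs.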

1.2 Text Recognition Inference Testing

Parameters for ppocr_rec_opencv.py are as follows:

usage: ppocr_rec_opencv.py [-h] [--dev_id DEV_ID] [--input INPUT] [--bmodel_rec BMODEL_REC] [--img_size IMG_SIZE] [--char_dict_path CHAR_DICT_PATH] [--use_space_char USE_SPACE_CHAR] [--use_beam_search]

[--beam_size {1~40}]

optional arguments:

-h, --help show this help message and exit

--dev_id DEV_ID tpu card id

--input INPUT input image directory path

--bmodel_rec BMODEL_REC

recognizer bmodel path

--img_size IMG_SIZE You should set inference size [width,height] manually if using multi-stage bmodel.

--char_dict_path CHAR_DICT_PATH

--use_space_char USE_SPACE_CHAR

--use_beam_search Enable beam search

--beam_size {1~40} Only valid when using beam search, valid range 1~40

Text recognition testing example is as follows:

#The program will automatically select 1batch or 4batch based on the number of images in the folder, with priority to 4batch inference.

python3 python/ppocr_rec_opencv.py --input datasets/cali_set_rec --bmodel_rec models/BM1684X/ch_PP-OCRv4_rec_fp32.bmodel --dev_id 0 --img_size [[640,48],[320,48]] --char_dict_path datasets/ppocr_keys_v1.txt
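
The `--img_size` value lists the [width,height] input sizes of a multi-stage recognition bmodel. The sketch below illustrates how such a value could be parsed and how a stage might be chosen for a cropped text line. Both function names are hypothetical, and the selection rule (narrowest stage that fits the crop once it is scaled to the stage height) is an assumption rather than the project's confirmed logic.

```python
import ast


def parse_img_size(text):
    """Parse a string such as '[[640,48],[320,48]]' into sorted (width, height) tuples."""
    sizes = ast.literal_eval(text)
    return sorted((int(w), int(h)) for w, h in sizes)


def pick_stage(crop_w, crop_h, sizes):
    """Pick the narrowest stage that fits the crop after scaling to the stage height.

    Assumption: sizes is sorted by width, as returned by parse_img_size.
    """
    for w, h in sizes:
        if crop_w * h / crop_h <= w:
            return (w, h)
    return sizes[-1]  # wider than every stage: fall back to the widest
```

In shells that treat square brackets as glob patterns (zsh in particular may report "no matches found"), quoting the value, as in `--img_size '[[640,48],[320,48]]'`, is the safer way to pass it.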

1.3 Full Pipeline Inference Testing

Parameters for ppocr_system_opencv.py are as follows:

usage: ppocr_system_opencv.py [-h] [--input INPUT] [--dev_id DEV_ID] [--batch_size BATCH_SIZE] [--bmodel_det BMODEL_DET] [--det_limit_side_len DET_LIMIT_SIDE_LEN] [--bmodel_rec BMODEL_REC] [--img_size IMG_SIZE]

[--char_dict_path CHAR_DICT_PATH] [--use_space_char USE_SPACE_CHAR] [--use_beam_search]

[--beam_size {1~40}] [--rec_thresh REC_THRESH] [--use_angle_cls]

[--bmodel_cls BMODEL_CLS] [--label_list LABEL_LIST] [--cls_thresh CLS_THRESH]

optional arguments:

-h, --help show this help message and exit

--input INPUT input image directory path

--dev_id DEV_ID tpu card id

--batch_size BATCH_SIZE

img num for a ppocr system process launch.

--bmodel_det BMODEL_DET

detector bmodel path

--det_limit_side_len DET_LIMIT_SIDE_LEN

--bmodel_rec BMODEL_REC

recognizer bmodel path

--img_size IMG_SIZE You should set inference size [width,height] manually if using multi-stage bmodel.

--char_dict_path CHAR_DICT_PATH

--use_space_char USE_SPACE_CHAR

--use_beam_search Enable beam search

--beam_size {1~40} Only valid when using beam search, valid range 1~40

--rec_thresh REC_THRESH

--use_angle_cls

--bmodel_cls BMODEL_CLS

classifier bmodel path

--label_list LABEL_LIST

--cls_thresh CLS_THRESH

Testing example is as follows:

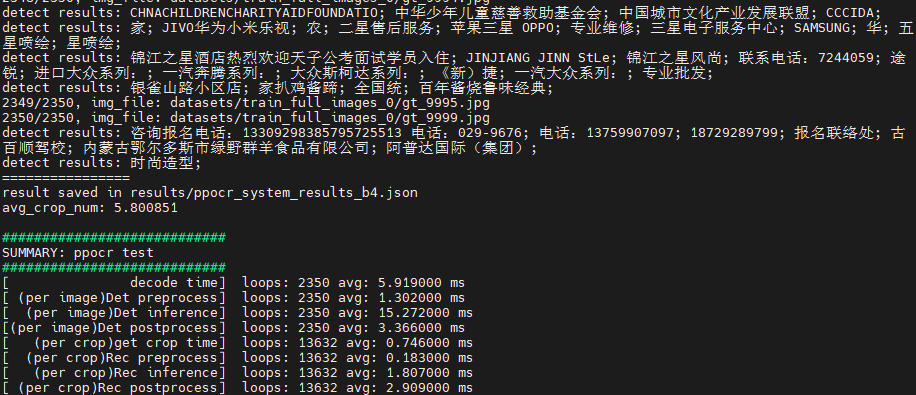

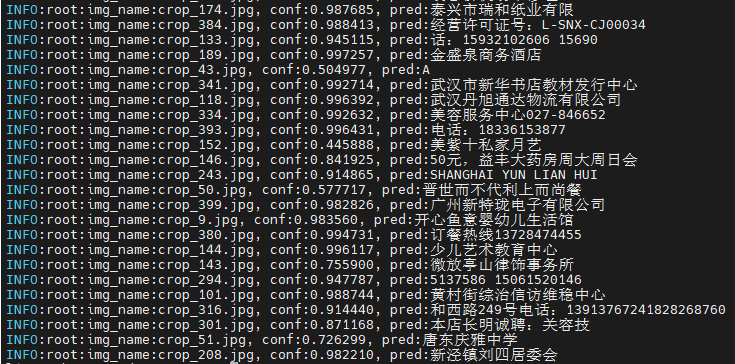

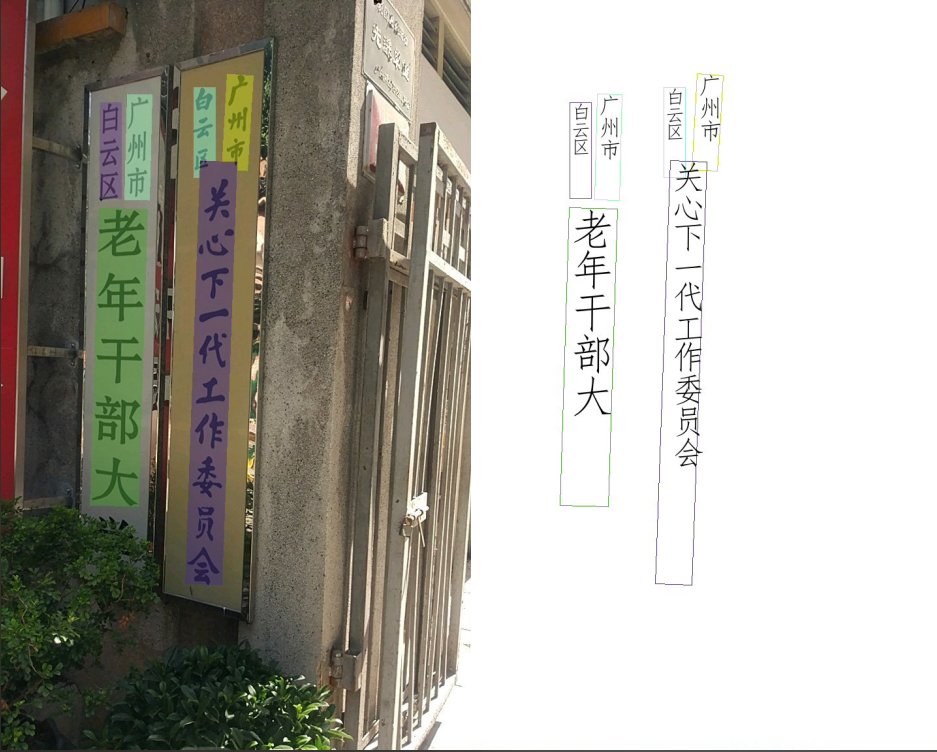

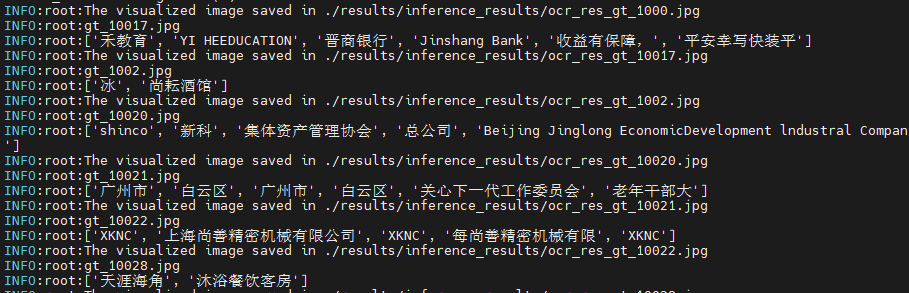

python3 python/ppocr_system_opencv.py --input datasets/train_full_images_0 \

--batch_size 4 \

--bmodel_det models/BM1684X/ch_PP-OCRv4_det_fp32.bmodel \

--bmodel_rec models/BM1684X/ch_PP-OCRv4_rec_fp32.bmodel \

--dev_id 0 \

--img_size [[640,48],[320,48]] \

--char_dict_path datasets/ppocr_keys_v1.txt

After execution, predicted fields will be printed, visualization results will be saved in the results/inference_results folder, and inference results will be saved in results/ppocr_system_results_b4.json.
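
The `--use_beam_search` and `--beam_size` options listed above switch the recognizer's decoding strategy. As an illustration of the difference, the sketch below contrasts greedy (best-path) CTC decoding with a textbook CTC prefix beam search. It assumes the recognizer emits per-step class probabilities with index 0 as the blank; this is a generic implementation for explanation and is not taken from the project's scripts, which may decode differently.

```python
import math
from collections import defaultdict

NEG_INF = float("-inf")


def logsumexp(*xs):
    """Numerically stable log(sum(exp(x))) that tolerates -inf inputs."""
    m = max(xs)
    if m == NEG_INF:
        return NEG_INF
    return m + math.log(sum(math.exp(x - m) for x in xs))


def ctc_greedy(probs, blank=0):
    """Best-path decoding: argmax per step, collapse repeats, drop blanks."""
    out, prev = [], blank
    for step in probs:
        k = max(range(len(step)), key=step.__getitem__)
        if k != blank and k != prev:
            out.append(k)
        prev = k
    return tuple(out)


def ctc_beam_search(probs, beam_size=3, blank=0):
    """CTC prefix beam search over per-step probabilities (T x C)."""
    # Each prefix keeps two log-scores: paths ending in blank / in non-blank.
    beams = {(): (0.0, NEG_INF)}
    for step in probs:
        nxt = defaultdict(lambda: (NEG_INF, NEG_INF))
        for prefix, (pb, pnb) in beams.items():
            for k, p in enumerate(step):
                if p <= 0.0:
                    continue
                lp = math.log(p)
                if k == blank:
                    b, nb = nxt[prefix]
                    nxt[prefix] = (logsumexp(b, pb + lp, pnb + lp), nb)
                    continue
                ext = prefix + (k,)
                b, nb = nxt[ext]
                if prefix and prefix[-1] == k:
                    # A repeated symbol extends the prefix only after a blank...
                    nxt[ext] = (b, logsumexp(nb, pb + lp))
                    # ...otherwise it collapses into the unchanged prefix.
                    b2, nb2 = nxt[prefix]
                    nxt[prefix] = (b2, logsumexp(nb2, pnb + lp))
                else:
                    nxt[ext] = (b, logsumexp(nb, pb + lp, pnb + lp))
        ranked = sorted(nxt.items(), key=lambda kv: logsumexp(*kv[1]), reverse=True)
        beams = dict(ranked[:beam_size])
    return max(beams.items(), key=lambda kv: logsumexp(*kv[1]))[0]
```

Greedy decoding keeps only the single most likely alignment, whereas beam search sums the probability of all alignments that collapse to the same text, so the two can disagree when the probability mass is spread across several alignments. A larger beam size explores more candidates at a higher computational cost.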

2. C++ Examples

1. Cross-compilation Environment Setup

C++ programs must be compiled against their dependencies before they can run on the board. To avoid loading the edge device with compilation work, an x86 Linux host is used for cross-compilation.

Two methods are provided for setting up the cross-compilation environment:

(1) Install cross-compilation toolchain via apt:

If your system and target SoC platform have the same libc version (can be queried via ldd --version command), you can install using the following command:

sudo apt-get install gcc-aarch64-linux-gnu g++-aarch64-linux-gnu

Uninstall method:

sudo apt remove cpp-*-aarch64-linux-gnu

If your environment does not meet the above requirements, it is recommended to use method (2).
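
The libc comparison mentioned for method (1) can be automated by parsing the output of `ldd --version` captured on the host and on the target. The sketch below assumes glibc-style output, where the version number ends the first line (for example `ldd (Ubuntu GLIBC 2.31-0ubuntu9.9) 2.31`). The function names are hypothetical.

```python
import re


def parse_glibc_version(ldd_output):
    """Extract (major, minor) from the first line of `ldd --version` output."""
    lines = ldd_output.splitlines()
    first_line = lines[0] if lines else ""
    match = re.search(r"(\d+)\.(\d+)\s*$", first_line)
    if match is None:
        raise ValueError(f"no version found in: {first_line!r}")
    return int(match.group(1)), int(match.group(2))


def same_libc(host_output, target_output):
    """True when host and target report the same glibc major.minor version."""
    return parse_glibc_version(host_output) == parse_glibc_version(target_output)
```

If `same_libc` returns False for the two captured outputs, the Docker-based method (2) is the safer choice.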

(2) Set up cross-compilation environment via docker:

You can use the provided Docker image, stream_dev.tar, as the cross-compilation environment.

If using Docker for the first time, execute the following commands to install and configure (only required for first time):

sudo apt install docker.io

sudo systemctl start docker

sudo systemctl enable docker

sudo groupadd docker

sudo usermod -aG docker $USER

newgrp docker

Load the image from the directory where it was downloaded:

docker load -i stream_dev.tar

You can view loaded images via docker images; the default is stream_dev:latest.

Create container

docker run --privileged --name stream_dev -v $PWD:/workspace -it stream_dev:latest

# stream_dev is just an example name, please specify your own container name

The /workspace directory in the container is mounted from the host directory in which you ran docker run, so projects can be compiled inside the container. /workspace sits directly under the container's root directory, and changes made there are reflected in the corresponding files on the host.

Note: Run docker run from the parent directory of soc-sdk (the dependency compilation environment) or from a directory above it, so that soc-sdk is visible inside the container.

1.2 Package Dependency Files

Package libsophon

Extract libsophon_soc_x.y.z_aarch64.tar.gz, where x.y.z is the version number.

# Create root directory for dependency files

mkdir -p soc-sdk

# Extract libsophon_soc_x.y.z_aarch64.tar.gz

tar -zxf libsophon_soc_${x.y.z}_aarch64.tar.gz

# Copy related library directories and header file directories to the dependency root directory

cp -rf libsophon_soc_${x.y.z}_aarch64/opt/sophon/libsophon-${x.y.z}/lib soc-sdk

cp -rf libsophon_soc_${x.y.z}_aarch64/opt/sophon/libsophon-${x.y.z}/include soc-sdk

Package sophon-ffmpeg and sophon-opencv

Extract sophon-mw-soc_x.y.z_aarch64.tar.gz, where x.y.z is the version number.

# Extract sophon-mw-soc_x.y.z_aarch64.tar.gz

tar -zxf sophon-mw-soc_${x.y.z}_aarch64.tar.gz

# Copy ffmpeg and opencv library directories and header file directories to soc-sdk directory

cp -rf sophon-mw-soc_${x.y.z}_aarch64/opt/sophon/sophon-ffmpeg_${x.y.z}/lib soc-sdk

cp -rf sophon-mw-soc_${x.y.z}_aarch64/opt/sophon/sophon-ffmpeg_${x.y.z}/include soc-sdk

cp -rf sophon-mw-soc_${x.y.z}_aarch64/opt/sophon/sophon-opencv_${x.y.z}/lib soc-sdk

cp -rf sophon-mw-soc_${x.y.z}_aarch64/opt/sophon/sophon-opencv_${x.y.z}/include soc-sdk
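
The copy steps above all follow the same pattern: the lib and include directories of each extracted package are merged into soc-sdk. For readers who prefer to script this, the following is a minimal Python sketch of that pattern. `merge_into_sdk` is a hypothetical helper, and the package directory passed to it is whatever path the extraction produced on your machine.

```python
import shutil
from pathlib import Path


def merge_into_sdk(package_root, sdk_root, subdirs=("lib", "include")):
    """Copy lib/ and include/ from an extracted package into the soc-sdk tree."""
    package_root, sdk_root = Path(package_root), Path(sdk_root)
    copied = []
    for name in subdirs:
        src = package_root / name
        if not src.is_dir():
            raise FileNotFoundError(f"expected directory missing: {src}")
        # dirs_exist_ok (Python 3.8+) lets several packages share
        # soc-sdk/lib and soc-sdk/include. With the default settings,
        # symlinked libraries are copied as regular files.
        shutil.copytree(src, sdk_root / name, dirs_exist_ok=True)
        copied.append(name)
    return copied
```

It would be called once per package directory (libsophon, sophon-ffmpeg, sophon-opencv), each time with the same soc-sdk destination.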

1.3 Perform Cross-compilation

After setting up the cross-compilation environment, use the cross-compilation toolchain to compile and generate executable files:

cd cpp/ppocr_bmcv

mkdir build && cd build

#Please modify -DSDK path according to actual situation, use absolute path.

cmake -DTARGET_ARCH=soc -DSDK=/workspace/soc-sdk/ ..

make

After compilation completes, a .soc file is generated in the corresponding directory, for example cpp/ppocr_bmcv/ppocr_bmcv.soc. A prebuilt copy of this file is also provided and can be used directly.

2. Inference Testing

You need to copy the executable files generated from cross-compilation and required models and test data to the SoC platform (i.e., BM1684X development board) for testing.

Parameter Description

The executable has a default value for every parameter; override them as needed for your setup. The parameters of ppocr_bmcv.soc are as follows:

Usage: ppocr_bmcv.soc [params]

--batch_size (value:4)

ppocr system batchsize

--beam_size (value:3)

beam size, default 3, available 1-40, only valid when using beam search

--bmodel_cls (value:../../models/BM1684X/ch_PP-OCRv3_cls_fp32.bmodel)

cls bmodel file path, unsupport now.

--bmodel_det (value:../../models/BM1684X/ch_PP-OCRv4_det_fp32.bmodel)

det bmodel file path

--bmodel_rec (value:../../models/BM1684X/ch_PP-OCRv4_rec_fp32.bmodel)

rec bmodel file path

--dev_id (value:0)

TPU device id

--help (value:true)

print help information.

--input (value:../../datasets/cali_set_det)

input path, images directory

--labelnames (value:../../datasets/ppocr_keys_v1.txt)

class names file path

--rec_thresh (value:0.5)

recognize threshold

--use_beam_search (value:false)

beam search trigger

Image Testing

Image testing example is as follows. Supports testing the entire image folder.

#Add executable permission to the file

chmod 755 cpp/ppocr_bmcv/ppocr_bmcv.soc

#Execute file

./cpp/ppocr_bmcv/ppocr_bmcv.soc --input=datasets/train_full_images_0 \

--batch_size=4 \

--bmodel_det=models/BM1684X/ch_PP-OCRv4_det_fp32.bmodel \

--bmodel_rec=models/BM1684X/ch_PP-OCRv4_rec_fp32.bmodel \

--labelnames=datasets/ppocr_keys_v1.txt

After testing, predicted images will be saved in results/images, predicted results will be saved in results/, and the predicted results, inference time, and other information will be printed.
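
When the executable is launched from a script on the board, the `--key=value` arguments shown above can be assembled programmatically. The sketch below is a hypothetical helper, not part of the project. It assumes boolean parameters are passed as `true`/`false`, matching the way the help output prints their defaults.

```python
def build_argv(executable, **params):
    """Render keyword parameters in the --key=value form used by ppocr_bmcv.soc."""
    argv = [executable]
    for key, value in params.items():
        if isinstance(value, bool):
            # Assumption: booleans are spelled true/false on the command line.
            value = "true" if value else "false"
        argv.append(f"--{key}={value}")
    return argv
```

The resulting list can be handed to `subprocess.run(argv, check=True)`, which avoids shell quoting issues with paths.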