Machine Vision Technology Development

Experiment 3 - Gesture Recognition Experience

Step 1: System Preparation

sudo apt update && sudo apt upgrade -y

sudo apt install -y build-essential cmake pkg-config python3-dev python3-pip

(Can be skipped if python3 is already installed.)

Step 2: Create Virtual Environment

cd OPENCV

python3 -m venv rdkx5_vision_env

source rdkx5_vision_env/bin/activate

Step 3: Install Dependencies

pip install --upgrade pip

pip install -r requirements.txt

Step 4: Test Environment

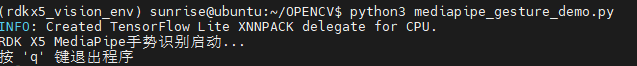

python3 mediapipe_gesture_demo.py
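If the demo does not start, a quick check of the environment can narrow down the cause. The following is a minimal sketch, not part of the sample code: the helper names `in_virtualenv` and `check_modules` are hypothetical, and the module list (`cv2`, `numpy`, `mediapipe`) is an assumption about what requirements.txt installs.

```python
import importlib.util
import sys


def in_virtualenv():
    """Return True when the interpreter is running inside a venv."""
    return sys.prefix != getattr(sys, "base_prefix", sys.prefix)


def check_modules(names):
    """Map each top-level module name to whether it can be located."""
    return {name: importlib.util.find_spec(name) is not None for name in names}


if __name__ == "__main__":
    print("virtual environment active:", in_virtualenv())
    # Assumed dependency list; adjust it to match requirements.txt.
    for name, ok in check_modules(["cv2", "numpy", "mediapipe"]).items():
        print(f"{name:10s} {'OK' if ok else 'MISSING'}")
```

A module reported as MISSING usually means the virtual environment was not activated before running `pip install -r requirements.txt`.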

The sample program includes the following features:

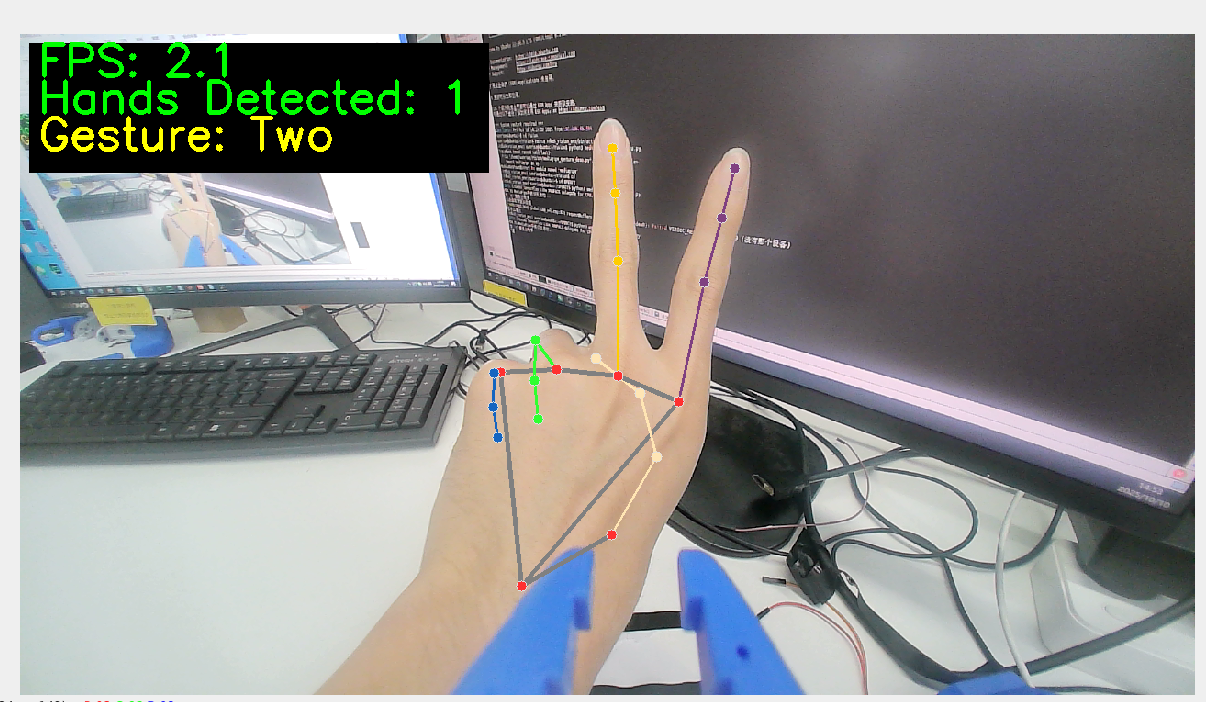

- ✅ Real-time gesture detection - supports simultaneous detection of both hands

- ✅ Number gesture recognition - recognizes finger counts 1-5

- ✅ Special gesture recognition - OK gesture, thumbs up gesture

- ✅ Performance monitoring - real-time FPS display

- ✅ Visual feedback - hand keypoint drawing
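The source of mediapipe_gesture_demo.py is not reproduced here, so the following is only a sketch of how finger counting from hand keypoints could work. It assumes the 21-point hand landmark layout used by MediaPipe Hands (thumb tip at index 4, fingertips at 8, 12, 16, 20) and an upright hand in image coordinates. The function name `count_extended_fingers` and the thumb rule are illustrative assumptions, not the demo's actual logic.

```python
import math

# Landmark indices in the 21-point hand model (assumed MediaPipe layout).
THUMB_IP, THUMB_TIP = 3, 4
PINKY_MCP = 17
# (tip, pip) index pairs for the index, middle, ring and little finger.
FINGERS = [(8, 6), (12, 10), (16, 14), (20, 18)]


def count_extended_fingers(landmarks):
    """Count extended fingers from 21 (x, y) points of an upright hand.

    Image coordinates are assumed: y grows downward, so an extended
    finger has its tip above (smaller y than) its PIP joint.
    """
    if len(landmarks) != 21:
        raise ValueError("expected 21 landmarks")

    count = sum(
        1 for tip, pip in FINGERS if landmarks[tip][1] < landmarks[pip][1]
    )

    # Thumb: treated as extended when its tip is farther from the base of
    # the little finger than its IP joint is (independent of handedness).
    ref = landmarks[PINKY_MCP]
    if math.dist(landmarks[THUMB_TIP], ref) > math.dist(landmarks[THUMB_IP], ref):
        count += 1
    return count
```

With real MediaPipe output, each landmark's normalized `x` and `y` values would be passed in as the `(x, y)` pairs. Special gestures such as OK or thumbs up need additional rules on top of this count.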

import cv2
import numpy as np
import math
import time

# Initialize camera
cap = cv2.VideoCapture(0)

# Set window size
window_width = 1280
window_height = 720

# Adjust camera resolution
cap.set(cv2.CAP_PROP_FRAME_WIDTH, window_width)
cap.set(cv2.CAP_PROP_FRAME_HEIGHT, window_height)

# Create window
cv2.namedWindow('Hand Gesture Recognition', cv2.WINDOW_NORMAL)
cv2.resizeWindow('Hand Gesture Recognition', window_width, window_height)

# Create slider window for adjusting skin color threshold
cv2.namedWindow('Skin Detection Controls')
cv2.resizeWindow('Skin Detection Controls', 400, 250)

# Create HSV threshold sliders for skin detection
# (OpenCV stores hue in the range 0-179, saturation and value in 0-255)
cv2.createTrackbar('H_min', 'Skin Detection Controls', 0, 179, lambda x: None)
cv2.createTrackbar('H_max', 'Skin Detection Controls', 20, 179, lambda x: None)
cv2.createTrackbar('S_min', 'Skin Detection Controls', 30, 255, lambda x: None)
cv2.createTrackbar('S_max', 'Skin Detection Controls', 150, 255, lambda x: None)
cv2.createTrackbar('V_min', 'Skin Detection Controls', 60, 255, lambda x: None)
cv2.createTrackbar('V_max', 'Skin Detection Controls', 255, 255, lambda x: None)

# Set default values
cv2.setTrackbarPos('H_min', 'Skin Detection Controls', 0)
cv2.setTrackbarPos('H_max', 'Skin Detection Controls', 20)
cv2.setTrackbarPos('S_min', 'Skin Detection Controls', 30)
cv2.setTrackbarPos('S_max', 'Skin Detection Controls', 150)
cv2.setTrackbarPos('V_min', 'Skin Detection Controls', 60)
cv2.setTrackbarPos('V_max', 'Skin Detection Controls', 255)

# Function to count fingers
def count_fingers(contour, drawing):
    # Calculate convex hull (as indices into the contour)
    hull = cv2.convexHull(contour, returnPoints=False)

    # If too few hull points, cannot calculate defects
    if len(hull) < 3:
        return 0

    # Calculate convexity defects
    defects = cv2.convexityDefects(contour, hull)
    if defects is None:
        return 0

    # Count valid convexity defects (gaps between fingers)
    finger_count = 0
    for i in range(defects.shape[0]):
        s, e, f, d = defects[i, 0]
        # Convert to plain ints so the points can be passed to drawing functions
        start = tuple(int(v) for v in contour[s][0])
        end = tuple(int(v) for v in contour[e][0])
        far = tuple(int(v) for v in contour[f][0])

        # Calculate three sides of triangle
        a = math.sqrt((end[0] - start[0]) ** 2 + (end[1] - start[1]) ** 2)
        b = math.sqrt((far[0] - start[0]) ** 2 + (far[1] - start[1]) ** 2)
        c = math.sqrt((end[0] - far[0]) ** 2 + (end[1] - far[1]) ** 2)

        # Skip degenerate triangles to avoid division by zero
        if b == 0 or c == 0:
            continue

        # Use the law of cosines to calculate the angle at the defect point;
        # clamp the cosine to [-1, 1] to guard against rounding errors
        cos_angle = (b ** 2 + c ** 2 - a ** 2) / (2 * b * c)
        angle = math.degrees(math.acos(max(-1.0, min(1.0, cos_angle))))

        # If angle is at most 90 degrees, consider it as gap between fingers
        if angle <= 90:
            # Mark defect point on image
            cv2.circle(drawing, far, 5, [0, 0, 255], -1)
            finger_count += 1

    # Defect count plus 1 equals finger count (because defects are gaps between fingers)
    return finger_count + 1

# Main loop
while cap.isOpened():
    success, image = cap.read()
    if not success:
        print("Unable to get camera frame")
        break

    # Flip image horizontally to look more like a mirror
    image = cv2.flip(image, 1)

    # Create a copy for drawing
    drawing = image.copy()

    # Convert to HSV color space
    hsv = cv2.cvtColor(image, cv2.COLOR_BGR2HSV)

    # Get current skin color threshold
    h_min = cv2.getTrackbarPos('H_min', 'Skin Detection Controls')
    h_max = cv2.getTrackbarPos('H_max', 'Skin Detection Controls')
    s_min = cv2.getTrackbarPos('S_min', 'Skin Detection Controls')
    s_max = cv2.getTrackbarPos('S_max', 'Skin Detection Controls')
    v_min = cv2.getTrackbarPos('V_min', 'Skin Detection Controls')
    v_max = cv2.getTrackbarPos('V_max', 'Skin Detection Controls')

    # Create skin color mask
    lower_skin = np.array([h_min, s_min, v_min])
    upper_skin = np.array([h_max, s_max, v_max])
    mask = cv2.inRange(hsv, lower_skin, upper_skin)

    # Perform morphological operations to remove noise
    kernel = np.ones((5, 5), np.uint8)
    mask = cv2.erode(mask, kernel, iterations=1)
    mask = cv2.dilate(mask, kernel, iterations=2)
    mask = cv2.GaussianBlur(mask, (5, 5), 0)

    # Find contours
    contours, _ = cv2.findContours(mask, cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)

    # Find the largest contour (assumed to be hand)
    if contours:
        max_contour = max(contours, key=cv2.contourArea)

        # Only process contours large enough
        if cv2.contourArea(max_contour) > 5000:
            # Draw contour
            cv2.drawContours(drawing, [max_contour], 0, (0, 255, 0), 2)

            # Calculate and display finger count
            finger_count = count_fingers(max_contour, drawing)

            # Limit finger count between 1-5
            finger_count = max(1, min(5, finger_count))

            # Display number on image
            cv2.putText(
                drawing,
                f"Fingers: {finger_count}",
                (50, 50),
                cv2.FONT_HERSHEY_SIMPLEX,
                1,
                (0, 255, 0),
                2,
                cv2.LINE_AA
            )

    # Draw usage instructions before showing the frame so they are visible
    cv2.putText(
        drawing,
        "Adjust sliders to detect skin color properly",
        (10, drawing.shape[0] - 40),
        cv2.FONT_HERSHEY_SIMPLEX,
        0.5,
        (0, 0, 255),
        1,
        cv2.LINE_AA
    )
    cv2.putText(
        drawing,
        "Press 'q' to quit",
        (10, drawing.shape[0] - 10),
        cv2.FONT_HERSHEY_SIMPLEX,
        0.5,
        (0, 0, 255),
        1,
        cv2.LINE_AA
    )

    # Display skin detection result
    cv2.imshow('Skin Detection', mask)

    # Display final result
    cv2.imshow('Hand Gesture Recognition', drawing)

    # Press 'q' to quit
    if cv2.waitKey(5) & 0xFF == ord('q'):
        break

# Release resources
cap.release()
cv2.destroyAllWindows()
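The angle test inside `count_fingers` can be tried on its own, without a camera. The sketch below isolates the law-of-cosines step for one defect triangle; the helper name `defect_angle` and the guards for degenerate input are additions for illustration, not part of the listing above.

```python
import math


def defect_angle(start, end, far):
    """Angle in degrees at `far` in the triangle start-far-end."""
    a = math.dist(start, end)  # side opposite the defect point
    b = math.dist(start, far)
    c = math.dist(end, far)
    if b == 0 or c == 0:
        return 180.0  # degenerate triangle: treat as "not a finger gap"
    cos_angle = (b * b + c * c - a * a) / (2 * b * c)
    # Clamp against floating-point rounding before calling acos.
    cos_angle = max(-1.0, min(1.0, cos_angle))
    return math.degrees(math.acos(cos_angle))


# A deep, narrow valley between two fingertips gives a sharp angle.
print(round(defect_angle((0, 0), (2, 0), (1, 3)), 1))    # 36.9
# A shallow dent along the palm edge gives an angle well above 90.
print(defect_angle((0, 0), (2, 0), (1, 0.1)) > 90)       # True
```

Only the first kind of defect passes the `angle <= 90` check, which is why shallow dents in the contour are not counted as gaps between fingers.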