01-ShiMetaPi Hybrid Vision SDK Introduction

📋 Overview

HVAlgo is a high-performance algorithm library designed by ShiMetaPi specifically for event cameras. It provides a range of advanced algorithms for event denoising, computer vision, and image restoration. The library is built on the ShiMetaPi Hybrid Vision SDK, supports real-time event stream processing, and is suited to applications such as robot vision, autonomous driving, and high-speed motion analysis.

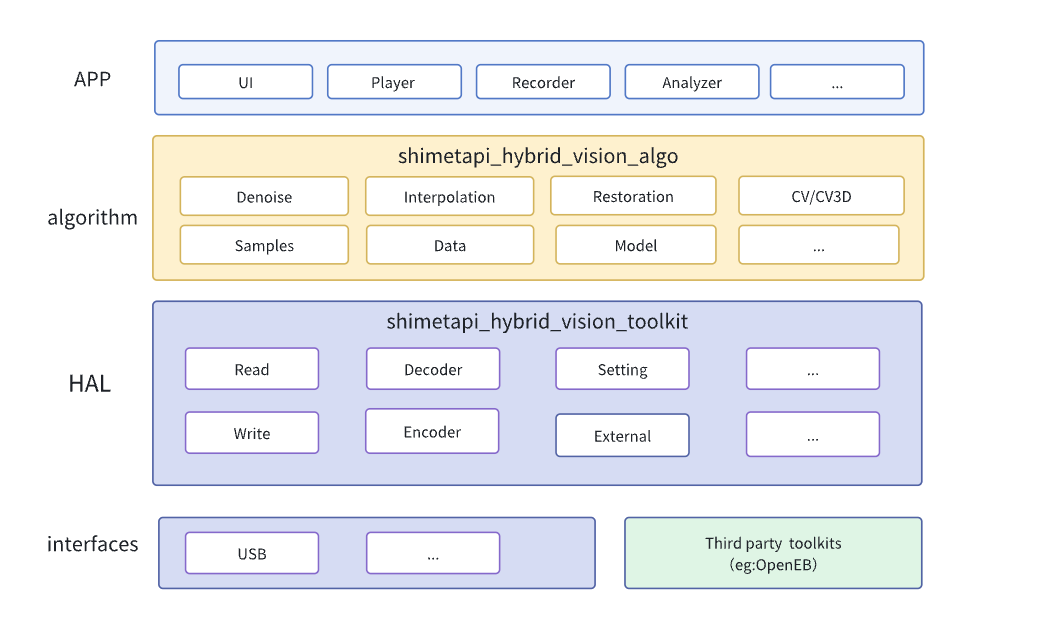

The ShiMetaPi Hybrid Vision SDK consists of two independent SDKs, hybrid_vision_toolkit and hybrid_vision_algo, which handle interface control and algorithm processing for vision fusion cameras, respectively.

📋 Overall SDK Architecture

shimetapi_Hybrid_vision_algo (Algorithm Layer SDK)

- Positioning: This is the core algorithm-processing layer of the SDK, located in the middle of the architecture (the yellow part of the diagram).

- Core Function: Processes the raw data streams from event cameras, running advanced computer vision algorithms to improve data quality, extract useful information, or perform 3D scene understanding.

- Included Modules:

  - Denoise (`Denoise`): Removes noise from event streams to improve signal quality.
  - Interpolation (`Interpolation`): Generates intermediate data points between events, or performs spatiotemporal alignment/fusion with standard frame images.
  - Restoration (`Restoration`): Handles missing or abnormal data.
  - Computer Vision/3D Vision (`CV/CV3D`): Algorithms for event-camera-specific or fusion applications, such as object detection, tracking, 3D reconstruction, and pose estimation.
  - Samples and Data (`Samples/data`): Example code, models, and the necessary datasets.
  - External Dependencies (`external`): Integrates third-party libraries to give users more device choices, for example the shimetapi Hybrid Vision Toolkit and OpenEB (installed via a command).

- Role: It provides processed, enhanced, or interpreted event data to the upper-layer application (`APP`). Application developers mainly call the interfaces of this layer to implement advanced event camera functions (such as playback, recording, and analysis).
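To make the `Denoise` module's job concrete, here is a minimal Python sketch of one widely used event-denoising idea, a background-activity (neighbourhood-support) filter: an event is kept only if a neighbouring pixel fired shortly before it, because isolated events are often sensor noise. This is an illustrative stand-in, not HVAlgo's implementation or API; the `Event` type, the function name, and the 1000 µs default window are assumptions chosen for the example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Event:
    x: int  # column
    y: int  # row
    t: int  # timestamp in microseconds
    p: int  # polarity: 1 = ON, 0 = OFF


def background_activity_filter(events: List[Event], width: int, height: int,
                               dt_us: int = 1000) -> List[Event]:
    """Keep an event only if one of its 8 neighbours fired within dt_us before it.

    Assumes events are sorted by timestamp and lie inside the sensor bounds.
    """
    # Last timestamp seen at each pixel; None means "never fired".
    last_ts: List[List[Optional[int]]] = [[None] * width for _ in range(height)]
    kept = []
    for ev in events:
        supported = False
        for dy in (-1, 0, 1):
            for dx in (-1, 0, 1):
                if dx == 0 and dy == 0:
                    continue  # the pixel itself does not count as support
                nx, ny = ev.x + dx, ev.y + dy
                if 0 <= nx < width and 0 <= ny < height:
                    ts = last_ts[ny][nx]
                    if ts is not None and ev.t - ts <= dt_us:
                        supported = True
        if supported:
            kept.append(ev)
        # Record this event whether or not it was kept, so it can support later ones.
        last_ts[ev.y][ev.x] = ev.t
    return kept


stream = [
    Event(10, 10, 0, 1),     # first event: no earlier neighbour, dropped
    Event(11, 10, 500, 1),   # neighbour (10, 10) fired 500 us earlier, kept
    Event(50, 50, 600, 0),   # isolated, dropped
    Event(12, 10, 5000, 1),  # nearest neighbour fired 4500 us earlier, dropped
]
print(background_activity_filter(stream, width=64, height=64))
```

The time window trades noise rejection against signal loss: a shorter `dt_us` removes more noise but also drops genuine events in slowly changing regions of the scene.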

shimetapi_Hybrid_vision_toolkit (HAL Layer Toolkit)

- Positioning: This is the Hardware Abstraction Layer (HAL) and basic tool layer of the SDK, located below the algorithm layer (the dark blue part of the diagram).

- Core Function: Provides functions for interacting with the hardware and for basic data stream operations. It reads raw data from physical interfaces, performs preliminary processing (such as encoding/decoding), and passes the data to the upper algorithm layer; it also receives instructions from the upper layer to control the hardware.

- Key Modules/Functions Included:

  - Read (`Read`): Acquires raw event data streams from hardware interfaces (such as USB).
  - Write (`Write`): Sends processed data or control instructions back to the hardware (if supported).
  - Setting (`Setting`): Configures camera parameters (such as resolution and sensitivity).
  - Decoder (`Decoder`): Decodes event data that is transmitted in a specific encoding into a processable format.
  - Encoder (`Encoder`): Encodes processed data or recorded streams into a specific format.

- Role: It abstracts away the details of the underlying hardware, giving the upper algorithm layer (`shimetapi_Hybrid_vision_algo`) a unified, relatively hardware-independent interface for accessing and controlling event camera data streams. It handles the underlying communication protocols, data transport, and basic format conversion.
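The `Decoder` and `Encoder` modules convert between a packed wire format and usable event fields. The SDK's actual encoding is not described here, so the Python sketch below assumes a made-up 64-bit layout (x in bits 0-13, y in bits 14-27, polarity in bit 28, a 32-bit microsecond timestamp in bits 32-63) purely to show the bit-packing pattern. The layout and all function names are hypothetical.

```python
import struct
from typing import List, Tuple

# HYPOTHETICAL packed layout, for illustration only (not the SDK's real format):
#   bits 0-13  : x (14 bits)
#   bits 14-27 : y (14 bits)
#   bit  28    : polarity
#   bits 32-63 : timestamp in microseconds (32 bits)
MASK14 = (1 << 14) - 1
MASK32 = (1 << 32) - 1


def encode_event(x: int, y: int, p: int, t: int) -> int:
    """Pack one event into a 64-bit integer word."""
    return (x & MASK14) | ((y & MASK14) << 14) | ((p & 1) << 28) | ((t & MASK32) << 32)


def decode_event(word: int) -> Tuple[int, int, int, int]:
    """Unpack one 64-bit word into (x, y, polarity, timestamp_us)."""
    return (word & MASK14, (word >> 14) & MASK14, (word >> 28) & 1, (word >> 32) & MASK32)


def encode_buffer(events: List[Tuple[int, int, int, int]]) -> bytes:
    """Encoder side: serialize (x, y, p, t) events as little-endian unsigned 64-bit words."""
    words = [encode_event(*ev) for ev in events]
    return struct.pack(f"<{len(words)}Q", *words)


def decode_buffer(raw: bytes) -> List[Tuple[int, int, int, int]]:
    """Decoder side: parse whole 64-bit words; a trailing partial word is ignored."""
    count = len(raw) // 8
    words = struct.unpack(f"<{count}Q", raw[: count * 8])
    return [decode_event(w) for w in words]


raw = encode_buffer([(100, 200, 1, 123456), (0, 5, 0, 7)])
print(decode_buffer(raw))
```

Real event-camera encodings are usually more compact (for example, sharing a timestamp base across many events), but the mask-and-shift pattern is the same.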

Summary of Relationship and Collaboration:

shimetapi_Hybrid_vision_toolkit (Toolkit/HAL Layer):

- Directly interacts with the hardware and the underlying system SDKs.
- Responsible for acquiring raw data (`Read`), performing basic format conversion (`Decoder`), controlling the hardware (`Setting`), and outputting data (`Write`, `Encoder`).
- Provides standardized data access interfaces to the upper layer (the `algo` layer).

shimetapi_Hybrid_vision_algo (Algorithm Layer):

- Built on top of the `toolkit` layer.
- Receives standardized event data streams from the `toolkit` layer.
- Uses its advanced algorithm modules (`Denoise`, `Interpolation`, `CV/CV3D`, etc.) to process, enhance, analyze, and interpret the data.
- Provides the processed, higher-value information to the top-layer application (`APP`), such as `UI` display, `Player` playback, `Recorder` recording, and `Analyzer` analysis.
- Compatible with different vendor interfaces (currently ShiMetaPi HV Toolkit and OpenEB).

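The layering summarized above, where the algorithm layer depends only on a standardized event interface rather than on a particular vendor backend, can be illustrated with a small Python sketch. `EventSource`, `FakeToolkitSource`, `FakeOpenEbSource`, `keep_on_events`, and `run_pipeline` are hypothetical names, not SDK classes; the two fake sources merely stand in for the ShiMetaPi HV Toolkit and OpenEB backends and replay in-memory data.

```python
from abc import ABC, abstractmethod
from typing import Callable, Dict, Iterator, List, Tuple

Event = Tuple[int, int, int, int]  # (x, y, polarity, timestamp_us)


class EventSource(ABC):
    """Hypothetical standardized interface exposed by the toolkit (HAL) layer."""

    @abstractmethod
    def read(self) -> Iterator[Event]:
        """Yield events in the common (x, y, polarity, timestamp_us) form."""


class FakeToolkitSource(EventSource):
    """Stand-in for a backend whose records already match the common form."""

    def __init__(self, events: List[Event]):
        self._events = list(events)

    def read(self) -> Iterator[Event]:
        yield from self._events


class FakeOpenEbSource(EventSource):
    """Stand-in for a backend with a different record layout (dicts)."""

    def __init__(self, records: List[Dict[str, int]]):
        self._records = list(records)

    def read(self) -> Iterator[Event]:
        for r in self._records:
            # Adapter step: translate the backend's layout into the common form.
            yield (r["x"], r["y"], r["p"], r["t"])


def keep_on_events(events: List[Event]) -> List[Event]:
    """Placeholder algorithm stage: keep only ON (polarity == 1) events."""
    return [e for e in events if e[2] == 1]


def run_pipeline(source: EventSource,
                 stage: Callable[[List[Event]], List[Event]]) -> List[Event]:
    """Algorithm layer: depends only on EventSource, never on a concrete backend."""
    return stage(list(source.read()))


a = FakeToolkitSource([(1, 2, 1, 10), (3, 4, 0, 20)])
b = FakeOpenEbSource([{"x": 1, "y": 2, "p": 1, "t": 10},
                      {"x": 3, "y": 4, "p": 0, "t": 20}])
print(run_pipeline(a, keep_on_events) == run_pipeline(b, keep_on_events))
```

Because `run_pipeline` only sees `EventSource`, supporting another camera backend in this design means writing one more adapter class; the algorithm stages stay unchanged.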