Voice LLM Application - Experiment 01: Voice Recognition

Experiment Preparation:

- Install ALSA tools (for recording and playback)

sudo apt-get install alsa-utils

- Install required dependencies

pip install -r requirements.txt

python -m pip install websocket-client

sudo apt-get update && sudo apt-get install -y ffmpeg

- Register an iFlytek account

Open the iFlytek Open Platform: https://www.xfyun.com.cn

Click to enter the console

Register and log in

Create an application

Enable the speech dictation service (under speech recognition)

Get the API ID, API Secret, API Key, and speech dictation interface URL

Save these four values (they will be filled into config.py later)

Experiment Steps:

- Check the voice module (make sure it is connected to the RDK mainboard and the speaker)

Terminal: arecord -l # Identify microphone card number and device number

Terminal: aplay -l # Check speaker/output devices

Terminal: sudo arecord -f S16_LE -r 16000 -c 1 -d 5 /tmp/test_mic.wav # Use default device to record for 5 seconds

Terminal: aplay /tmp/test_mic.wav # Play audio

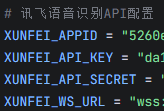
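If the default device does not record from the expected microphone, the card and device numbers reported by arecord -l can be turned into an explicit ALSA device name. The following is a minimal sketch, assuming the usual "card N: ..., device M: ..." line layout of arecord -l; the function name list_capture_devices and the sample text are illustrative and not part of the example package.

```python
import re
from typing import List

# Matches lines such as:
#   card 1: Device [USB Audio Device], device 0: USB Audio [USB Audio]
CARD_LINE = re.compile(r"^card (\d+): .*?, device (\d+):", re.MULTILINE)


def list_capture_devices(arecord_output: str) -> List[str]:
    """Return ALSA device names (e.g. 'plughw:1,0') found in `arecord -l` output."""
    return [
        "plughw:{},{}".format(card, device)
        for card, device in CARD_LINE.findall(arecord_output)
    ]


# Illustrative sample text; real output depends on the connected hardware.
sample = """**** List of CAPTURE Hardware Devices ****
card 1: Device [USB Audio Device], device 0: USB Audio [USB Audio]
  Subdevices: 1/1
  Subdevice #0: subdevice #0
"""

devices = list_capture_devices(sample)
print(devices)
```

A name obtained this way can be passed to arecord with the -D option, for example arecord -D plughw:1,0 -f S16_LE -r 16000 -c 1 -d 5 /tmp/test_mic.wav.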

- Fill in API ID, API Secret, API Key, and speech dictation interface URL in config.py

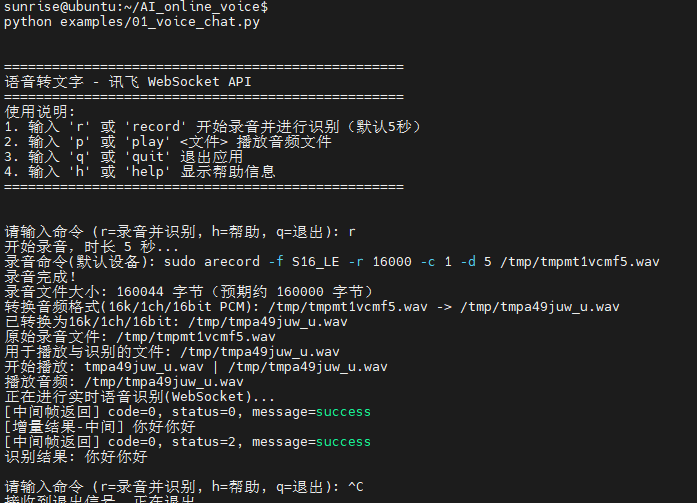
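Before running the example, it can help to confirm that none of the four values was left blank. The sketch below is only an illustration: the field names APP_ID, API_SECRET, API_KEY, and IAT_URL are hypothetical (use whatever names the project's config.py actually defines), and the ws:// or wss:// check assumes the dictation interface is a WebSocket endpoint, which the websocket-client dependency suggests but the source does not state.

```python
from typing import Dict, List
from urllib.parse import urlparse

# Hypothetical field names; replace with the names used in the real config.py.
REQUIRED_FIELDS = ("APP_ID", "API_SECRET", "API_KEY", "IAT_URL")


def find_config_problems(config: Dict[str, str]) -> List[str]:
    """Return a list of human-readable problems; an empty list means no problem was found."""
    problems = []
    for name in REQUIRED_FIELDS:
        if not str(config.get(name, "")).strip():
            problems.append(name + " is empty")
    url = str(config.get("IAT_URL", "")).strip()
    # Assumption: the dictation interface URL is a WebSocket address.
    if url and urlparse(url).scheme not in ("ws", "wss"):
        problems.append("IAT_URL should start with ws:// or wss://")
    return problems


# Placeholder values only; real credentials come from the iFlytek console.
example_config = {
    "APP_ID": "your-app-id",
    "API_SECRET": "your-api-secret",
    "API_KEY": "",
    "IAT_URL": "wss://example.invalid/dictation",
}
print(find_config_problems(example_config))
```

With the placeholder values above, the only reported problem is the empty API_KEY entry.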

cd AI_online_voice # Enter the package directory

python examples/01_voice_chat.py # Run the example program; enter r to start testing

Terminal output:

Experiment Effect: The program records audio (5 seconds by default), plays the recording back, uploads it to the iFlytek speech dictation large model, and prints the recognition result in the Linux terminal.
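The record, play back, and recognize flow described above can be sketched roughly as follows. This is not the code in examples/01_voice_chat.py; it is a minimal illustration that reuses the arecord and aplay commands from the module check. The recognize argument is a hypothetical callable standing in for the upload to the dictation service, and the function names are assumptions made for this sketch.

```python
import subprocess
import wave
from typing import Callable, List


def record_command(path: str, seconds: int = 5) -> List[str]:
    """arecord arguments for 16 kHz, mono, 16-bit little-endian audio."""
    return ["arecord", "-f", "S16_LE", "-r", "16000", "-c", "1",
            "-d", str(seconds), path]


def playback_command(path: str) -> List[str]:
    """aplay arguments to play the recorded file."""
    return ["aplay", path]


def wav_matches_format(path: str, rate: int = 16000, channels: int = 1,
                       sample_bytes: int = 2) -> bool:
    """Check that the WAV header matches the format requested from arecord."""
    with wave.open(path, "rb") as wav:
        return (wav.getframerate() == rate
                and wav.getnchannels() == channels
                and wav.getsampwidth() == sample_bytes)


def run_once(path: str, recognize: Callable[[str], str],
             run=subprocess.run) -> str:
    """Record, play back, verify the file, then hand it to `recognize`.

    `recognize` is a hypothetical placeholder for the code that uploads
    the audio to the dictation service and returns the recognized text.
    """
    run(record_command(path), check=True)
    run(playback_command(path), check=True)
    if not wav_matches_format(path):
        raise ValueError("recording is not 16 kHz mono 16-bit audio")
    return recognize(path)
```

Passing run as a parameter keeps the flow testable on a machine without audio hardware; on the RDK board the default subprocess.run executes the real commands.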