Machine Vision Technology Development

Experiment 2 - Color Recognition Detection

    pip install opencv-python  # Install the OpenCV library (Python 3 must be installed separately; skip this step if OpenCV is already installed)
    cd OPENCV  # Enter the OPENCV directory
    sudo python3 ./color_detection.py  # Run the Python script
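Before running the script, it can help to confirm that the modules it imports are visible to the Python 3 interpreter. The following is only a minimal sketch using the standard library; `module_available` is a hypothetical helper name and is not part of OpenCV or of the script in this experiment.

```python
import importlib.util


def module_available(name: str) -> bool:
    """Return True if a top-level module called `name` can be found for import."""
    return importlib.util.find_spec(name) is not None


if __name__ == "__main__":
    # cv2 is provided by the opencv-python package; numpy is installed with it.
    for mod in ("cv2", "numpy"):
        status = "found" if module_available(mod) else "missing"
        print(f"{mod}: {status}")
```

If `cv2` is reported as missing when the check is run with `sudo python3`, the package was probably installed for a different user or interpreter than the one running the script.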

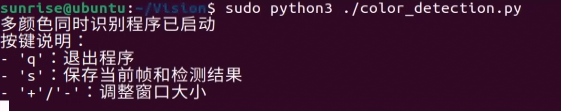

The terminal displays:

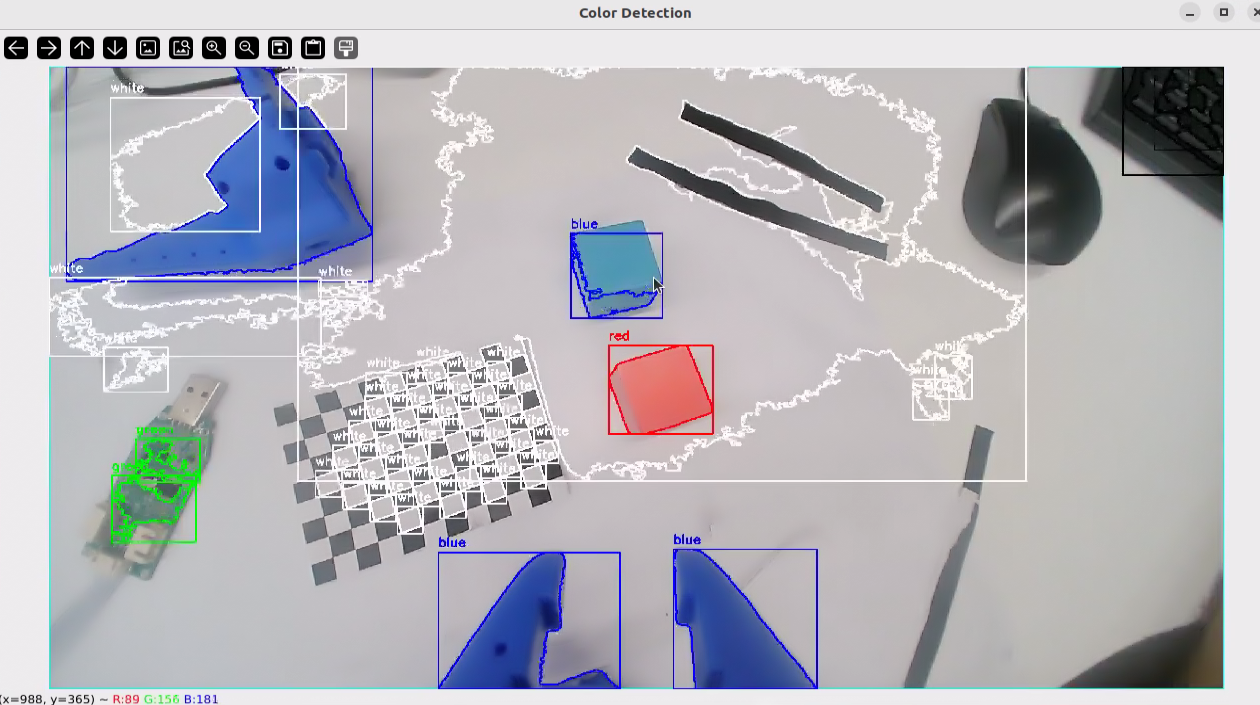

The Linux system now displays the camera's live video. Key presses are only registered while one of the video windows has focus, so click a window before testing the keys. The program code is as follows:

#!/usr/bin/env python3
# -*- coding: utf-8 -*-
"""
Multi-color simultaneous recognition program

Function: Real-time recognition of multiple colored objects in the camera feed
"""

import argparse
import os
import sys

import cv2
import numpy as np

def main():
    """
    Main function: Open camera and perform multi-color simultaneous recognition
    """
    # Parse command line arguments
    parser = argparse.ArgumentParser(description='Multi-color simultaneous recognition program')
    parser.add_argument('--width', type=int, default=2560, help='Display window width')
    parser.add_argument('--height', type=int, default=1440, help='Display window height')
    args = parser.parse_args()

    # Open default camera
    cap = cv2.VideoCapture(0)

    # Check if camera opened successfully
    if not cap.isOpened():
        print("Error: Unable to open camera")
        sys.exit(1)

    # Set camera resolution
    cap.set(cv2.CAP_PROP_FRAME_WIDTH, args.width)
    cap.set(cv2.CAP_PROP_FRAME_HEIGHT, args.height)

    # Create windows and set sizes
    cv2.namedWindow('Original', cv2.WINDOW_NORMAL)
    cv2.namedWindow('Color Detection', cv2.WINDOW_NORMAL)
    cv2.namedWindow('Controls', cv2.WINDOW_NORMAL)

    # Set window sizes
    cv2.resizeWindow('Original', args.width // 2, args.height // 2)
    cv2.resizeWindow('Color Detection', args.width // 2, args.height // 2)
    cv2.resizeWindow('Controls', 600, 300)

    # Create HSV color range sliders
    cv2.createTrackbar('H_min', 'Controls', 0, 179, lambda x: None)
    cv2.createTrackbar('H_max', 'Controls', 179, 179, lambda x: None)
    cv2.createTrackbar('S_min', 'Controls', 0, 255, lambda x: None)
    cv2.createTrackbar('S_max', 'Controls', 255, 255, lambda x: None)
    cv2.createTrackbar('V_min', 'Controls', 0, 255, lambda x: None)
    cv2.createTrackbar('V_max', 'Controls', 255, 255, lambda x: None)

    # Define color ranges and corresponding color names and display colors
    color_ranges = {
        'red': {
            'ranges': [(0, 50, 50), (10, 255, 255), (160, 50, 50), (179, 255, 255)],  # Red has two ranges
            'color': (0, 0, 255)  # BGR format: Blue=0, Green=0, Red=255
        },
        'green': {
            'ranges': [(35, 50, 50), (85, 255, 255)],
            'color': (0, 255, 0)  # BGR format: Blue=0, Green=255, Red=0
        },
        'blue': {
            'ranges': [(100, 50, 50), (130, 255, 255)],
            'color': (255, 0, 0)  # BGR format: Blue=255, Green=0, Red=0
        },
        'yellow': {
            'ranges': [(20, 100, 100), (30, 255, 255)],
            'color': (0, 255, 255)  # BGR format: Blue=0, Green=255, Red=255
        },
        'white': {
            'ranges': [(0, 0, 200), (180, 30, 255)],
            'color': (255, 255, 255)  # BGR format: Blue=255, Green=255, Red=255
        },
        'black': {
            'ranges': [(0, 0, 0), (180, 255, 30)],
            'color': (0, 0, 0)  # BGR format: Blue=0, Green=0, Red=0
        }
    }

    # Set initial slider positions for custom color
    cv2.setTrackbarPos('H_min', 'Controls', 0)
    cv2.setTrackbarPos('S_min', 'Controls', 0)
    cv2.setTrackbarPos('V_min', 'Controls', 0)
    cv2.setTrackbarPos('H_max', 'Controls', 179)
    cv2.setTrackbarPos('S_max', 'Controls', 255)
    cv2.setTrackbarPos('V_max', 'Controls', 255)

    print("Multi-color simultaneous recognition program started")
    print("Key instructions:")
    print("- 'q': Exit program")
    print("- 's': Save current frame and detection results")
    print("- '+'/'-': Adjust window size")

    # Loop to read camera frames
    while True:
        # Read a frame
        ret, frame = cap.read()

        # If failed to read, exit loop
        if not ret:
            print("Error: Unable to read camera frame")
            break

        # Convert to HSV color space
        hsv = cv2.cvtColor(frame, cv2.COLOR_BGR2HSV)

        # Get current slider values (for custom color detection)
        h_min = cv2.getTrackbarPos('H_min', 'Controls')
        h_max = cv2.getTrackbarPos('H_max', 'Controls')
        s_min = cv2.getTrackbarPos('S_min', 'Controls')
        s_max = cv2.getTrackbarPos('S_max', 'Controls')
        v_min = cv2.getTrackbarPos('V_min', 'Controls')
        v_max = cv2.getTrackbarPos('V_max', 'Controls')

        # Create custom color mask
        custom_lower = np.array([h_min, s_min, v_min])
        custom_upper = np.array([h_max, s_max, v_max])
        custom_mask = cv2.inRange(hsv, custom_lower, custom_upper)

        # Create detection result image
        detection_frame = frame.copy()

        # Process custom color
        contours, _ = cv2.findContours(custom_mask, cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)
        for contour in contours:
            area = cv2.contourArea(contour)
            if area < 500:  # Ignore contours that are too small
                continue

            # Draw contour
            cv2.drawContours(detection_frame, [contour], -1, (255, 255, 0), 2)  # Cyan

            # Calculate bounding rectangle
            x, y, w, h = cv2.boundingRect(contour)

            # Display "Custom" above rectangle
            cv2.putText(detection_frame, "Custom", (x, y - 10),
                        cv2.FONT_HERSHEY_SIMPLEX, 0.7, (255, 255, 0), 2)

            # Draw rectangle
            cv2.rectangle(detection_frame, (x, y), (x + w, y + h), (255, 255, 0), 2)

        # Detect each predefined color
        for color_name, color_info in color_ranges.items():
            # Create mask
            if color_name == 'red':  # Red needs special treatment (two ranges)
                lower1 = np.array(color_info['ranges'][0])
                upper1 = np.array(color_info['ranges'][1])
                lower2 = np.array(color_info['ranges'][2])
                upper2 = np.array(color_info['ranges'][3])
                mask1 = cv2.inRange(hsv, lower1, upper1)
                mask2 = cv2.inRange(hsv, lower2, upper2)
                color_mask = cv2.bitwise_or(mask1, mask2)
            else:
                lower = np.array(color_info['ranges'][0])
                upper = np.array(color_info['ranges'][1])
                color_mask = cv2.inRange(hsv, lower, upper)

            # Find contours
            contours, _ = cv2.findContours(color_mask, cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)

            # Process contours
            for contour in contours:
                area = cv2.contourArea(contour)
                if area < 500:  # Ignore contours that are too small
                    continue

                # Draw contour
                cv2.drawContours(detection_frame, [contour], -1, color_info['color'], 2)

                # Calculate bounding rectangle
                x, y, w, h = cv2.boundingRect(contour)

                # Display color name above rectangle
                cv2.putText(detection_frame, color_name, (x, y - 10),
                            cv2.FONT_HERSHEY_SIMPLEX, 0.7, color_info['color'], 2)

                # Draw rectangle
                cv2.rectangle(detection_frame, (x, y), (x + w, y + h), color_info['color'], 2)

        # Display images
        cv2.imshow('Original', frame)
        cv2.imshow('Color Detection', detection_frame)

        # Wait for key press
        key = cv2.waitKey(30) & 0xFF

        # Handle key press
        if key == ord('q'):
            print("User exited program")
            break
        elif key == ord('s'):
            # Create save directory
            save_dir = "color_detection_images"
            if not os.path.exists(save_dir):
                os.makedirs(save_dir)

            # Generate file names
            import time
            timestamp = time.strftime("%Y%m%d_%H%M%S")
            original_filename = os.path.join(save_dir, f"original_{timestamp}.jpg")
            detection_filename = os.path.join(save_dir, f"detection_{timestamp}.jpg")

            # Save images
            cv2.imwrite(original_filename, frame)
            cv2.imwrite(detection_filename, detection_frame)
            print(f"Saved images: {original_filename}, {detection_filename}")
        elif key == ord('+') or key == ord('='):  # '=' and '+' are usually on the same key
            # Increase window size
            current_width = cv2.getWindowImageRect('Color Detection')[2]
            current_height = cv2.getWindowImageRect('Color Detection')[3]
            new_width = int(current_width * 1.1)
            new_height = int(current_height * 1.1)
            cv2.resizeWindow('Original', new_width, new_height)
            cv2.resizeWindow('Color Detection', new_width, new_height)
            print(f"Window size increased to: {new_width}x{new_height}")
        elif key == ord('-'):
            # Decrease window size
            current_width = cv2.getWindowImageRect('Color Detection')[2]
            current_height = cv2.getWindowImageRect('Color Detection')[3]
            new_width = int(current_width * 0.9)
            new_height = int(current_height * 0.9)
            cv2.resizeWindow('Original', new_width, new_height)
            cv2.resizeWindow('Color Detection', new_width, new_height)
            print(f"Window size decreased to: {new_width}x{new_height}")

    # Release resources
    cap.release()
    cv2.destroyAllWindows()
    print("Program exited")


if __name__ == "__main__":
    try:
        main()
    except Exception as e:
        print(f"Program error: {e}")
        sys.exit(1)
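
To see how the HSV ranges in `color_ranges` decide which label a pixel receives, and why red needs two ranges, the following is a minimal pure-Python sketch. It is not part of the original script: it approximates OpenCV's 8-bit HSV conversion with the standard-library `colorsys` module (small rounding differences from `cv2.cvtColor` are possible), and `bgr_to_opencv_hsv` and `matching_colors` are hypothetical helper names introduced only for illustration.

```python
import colorsys

# HSV ranges copied from color_ranges in the listing above, grouped as
# (lower, upper) pairs. OpenCV convention: H in 0..179, S and V in 0..255.
COLOR_RANGES = {
    "red": [((0, 50, 50), (10, 255, 255)), ((160, 50, 50), (179, 255, 255))],
    "green": [((35, 50, 50), (85, 255, 255))],
    "blue": [((100, 50, 50), (130, 255, 255))],
    "yellow": [((20, 100, 100), (30, 255, 255))],
    "white": [((0, 0, 200), (180, 30, 255))],
    "black": [((0, 0, 0), (180, 255, 30))],
}


def bgr_to_opencv_hsv(b, g, r):
    """Approximate the 8-bit BGR -> HSV conversion for a single pixel (assumption)."""
    h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
    # Hue is stored as degrees / 2 so that it fits in one byte (0..179).
    return int(round(h * 180)) % 180, int(round(s * 255)), int(round(v * 255))


def matching_colors(b, g, r):
    """Return the names of all predefined colors whose range contains the pixel."""
    hsv = bgr_to_opencv_hsv(b, g, r)
    names = []
    for name, ranges in COLOR_RANGES.items():
        for lower, upper in ranges:
            # Same per-channel test as an inclusive range check on H, S and V.
            if all(lo <= c <= hi for lo, c, hi in zip(lower, hsv, upper)):
                names.append(name)
                break  # one matching range is enough for this color
    return names


if __name__ == "__main__":
    samples = {
        "pure red": (0, 0, 255),
        "pinkish red": (60, 0, 255),  # hue wraps to the upper red range
        "pure green": (0, 255, 0),
        "white": (255, 255, 255),
    }
    for label, bgr in samples.items():
        print(label, bgr_to_opencv_hsv(*bgr), matching_colors(*bgr))
```

Red sits at both ends of the hue axis: pure red has hue 0, while a red with a little blue mixed in has a hue close to 179. This is why the script builds two masks for red and combines them with `cv2.bitwise_or`.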