01 RK3568 NPU Overview

1 What is NPU

1.1 Basic Concept of NPU

An NPU (Neural Processing Unit) is a processor dedicated to artificial intelligence and machine learning workloads. Compared with general-purpose CPUs and GPUs, an NPU delivers more inference throughput per watt when running neural network inference.

1.2 Technical Features of NPU

Dedicated Architecture Design

- Parallel Computing Capability: Optimized for neural network matrix operations

- Low Power Design: Lower power consumption in inference tasks compared to GPU

- Efficient Memory Access: Optimized memory hierarchy reduces data movement

- Fixed-Point Operation Support: Supports low-precision operations such as INT8/INT16, improving inference speed
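The sketch below makes the INT8 fixed-point idea concrete. It shows generic asymmetric (affine) INT8 quantization, which maps a float range onto [-128, 127] using a scale and a zero point. This is the textbook scheme, shown for illustration only. It is an assumption that RKNN's internal quantizer behaves exactly like this, and the function names are hypothetical.

```python
def quant_params(x_min, x_max, qmin=-128, qmax=127):
    """Compute scale and zero point mapping [x_min, x_max] onto [qmin, qmax]."""
    # Widen the range to include 0 so that 0.0 is exactly representable.
    x_min, x_max = min(x_min, 0.0), max(x_max, 0.0)
    scale = (x_max - x_min) / (qmax - qmin) or 1.0  # avoid a zero scale
    zero_point = round(qmin - x_min / scale)
    return scale, max(qmin, min(qmax, zero_point))


def quantize(values, scale, zero_point, qmin=-128, qmax=127):
    """Float -> INT8: divide by scale, round, shift by zero point, clamp."""
    return [max(qmin, min(qmax, round(v / scale) + zero_point)) for v in values]


def dequantize(qvalues, scale, zero_point):
    """INT8 -> float approximation of the original values."""
    return [(q - zero_point) * scale for q in qvalues]


weights = [-1.0, 0.0, 0.5, 2.0]
scale, zp = quant_params(min(weights), max(weights))
q = quantize(weights, scale, zp)
print(q, dequantize(q, scale, zp))
```

For values inside the calibrated range, the round-trip error is at most half a quantization step, about 0.006 for the range used here.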

Application Scenarios

- Computer Vision: Image classification, object detection, face recognition

- Speech and Natural Language Processing: Speech recognition, text analysis

- Intelligent Control: Industrial automation, robot control

- Edge Computing: IoT devices, intelligent monitoring

1.3 NPU vs CPU/GPU Comparison

| Feature | CPU | GPU | NPU |
|---|---|---|---|
| Architecture | General Computing | Parallel Computing | AI Dedicated Computing |
| Inference Performance | Low | Medium | High |
| Power Efficiency | Low | Medium | High |
| Programming Complexity | Simple | Medium | Simple (Framework Support) |
| Applicable Scenarios | General Tasks | Graphics/Parallel Computing | AI Inference |

2 RK3568 NPU Performance Indicators

2.1 Hardware Specifications

Basic Parameters

- NPU Model: Rockchip in-house (self-developed) NPU

- Computing Performance: 0.8 TOPS (INT8)

- Supported Precision: INT8, INT16, FP16, BFP16

- Memory: No dedicated NPU memory; the NPU shares system memory (and its bandwidth) with the rest of the SoC

- Operating Frequency: Up to 600MHz

Architecture Features

```text
RK3568 NPU Architecture
├── Computing Unit
│   ├── Matrix Multiplication Unit (MAC Array)
│   ├── Activation Function Unit (Activation)
│   └── Pooling Unit (Pooling)
├── Memory Subsystem
│   ├── On-chip Cache
│   ├── DMA Controller
│   └── Memory Management Unit (MMU)
└── Control Unit
    ├── Instruction Decoder
    ├── Scheduler
    └── Interrupt Controller
```

2.2 Performance Benchmark

Typical Model Performance (INT8)

| Model | Input Size | Inference Time | FPS | Accuracy Loss |
|---|---|---|---|---|
| MobileNetV2 | 224x224x3 | ~15ms | ~66 | < 1% |
| YOLOv5s | 640x640x3 | ~180ms | ~5.5 | < 2% |
| ResNet50 | 224x224x3 | ~45ms | ~22 | < 1% |
| EfficientNet-B0 | 224x224x3 | ~25ms | ~40 | < 1% |
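The FPS column follows directly from the latency column. When one model instance processes frames back to back, FPS is 1000 divided by the per-frame latency in milliseconds. The small check below assumes no pipelining or batching. The helper name is hypothetical.

```python
def fps_from_latency(latency_ms):
    """Frames per second for sequential, single-stream inference."""
    return 1000.0 / latency_ms


# Approximate latencies (ms) taken from the benchmark table
LATENCY_MS = {"MobileNetV2": 15, "YOLOv5s": 180, "ResNet50": 45, "EfficientNet-B0": 25}

for model, ms in LATENCY_MS.items():
    print(f"{model:16s} {ms:4d} ms -> {fps_from_latency(ms):5.1f} FPS")
```

Sometimes pre-processing (resize, color conversion) and post-processing (for example YOLO box decoding and NMS) run on the CPU in the same loop. In that case, add their time to the latency before computing end-to-end FPS.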

Power Consumption Characteristics

- Peak Power Consumption: About 1.2W

- Average Power Consumption: About 0.8W (Typical inference task)

- Standby Power Consumption: < 10mW

- Power Efficiency: About 667 GOPS/W (0.8 TOPS peak performance / 1.2 W peak power)
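Both the efficiency figure and a rough energy cost per inference can be derived from the numbers in this section. Energy per inference is average power multiplied by latency. The sketch below combines the 0.8 W typical power with the MobileNetV2 latency from the benchmark table. Treat the result as an order-of-magnitude estimate, not a measurement. The helper names are hypothetical.

```python
def gops_per_watt(tops, watts):
    """Convert TOPS and watts into GOPS/W (1 TOPS = 1000 GOPS)."""
    return tops * 1000.0 / watts


def energy_per_inference_mj(avg_power_w, latency_ms):
    """Energy in millijoules: watts x milliseconds = millijoules."""
    return avg_power_w * latency_ms


print(f"{gops_per_watt(0.8, 1.2):.0f} GOPS/W")  # peak throughput / peak power
print(f"{energy_per_inference_mj(0.8, 15):.1f} mJ per MobileNetV2 frame (estimate)")
```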

2.3 Supported Operators

① Convolution Operators

These operators are the core building blocks of deep learning, especially in computer vision, where they extract features from input data such as images.

- Conv2D (Standard Convolution)

- DepthwiseConv2D (Depthwise Convolution)

- TransposeConv2D (Transposed Convolution)

- DilatedConv2D (Dilated Convolution)

- Conv2D: Standard convolution. A learnable convolution kernel (filter) slides over the input feature map to extract local features such as edges and textures.

- DepthwiseConv2D: Applies a separate convolution kernel to each input channel, without mixing channels. It is usually followed by a Pointwise Convolution (a 1x1 convolution that combines channel information). Together the two form a depthwise separable convolution, which uses far fewer calculations and parameters than a standard convolution.

- TransposeConv2D: Often loosely called the "inverse" of standard convolution. It does not recover the original input, but it reverses the shape mapping, upsampling (enlarging) a small feature map into a larger one.

- DilatedConv2D: Inserts gaps (zeros) between the elements of a standard convolution kernel. This enlarges the kernel's receptive field and captures broader context without increasing the number of parameters or calculations.
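The sliding-window computation, the effect of dilation, and the parameter savings of depthwise separable convolution can be sketched in plain Python. The helpers below are hypothetical illustrations with these simplifications:

- They handle a single channel with no padding.
- Like deep learning frameworks, they compute cross-correlation, so the kernel is not flipped.
- They show what the operators compute, not how the NPU executes them.

```python
def conv2d_single(image, kernel, stride=1, dilation=1):
    """Single-channel 2D convolution (cross-correlation), no padding."""
    h, w = len(image), len(image[0])
    kh, kw = len(kernel), len(kernel[0])
    # A dilated kernel spans k + (k - 1) * (dilation - 1) input pixels per side.
    span_h = kh + (kh - 1) * (dilation - 1)
    span_w = kw + (kw - 1) * (dilation - 1)
    out_h = (h - span_h) // stride + 1
    out_w = (w - span_w) // stride + 1
    out = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            acc = 0
            for u in range(kh):
                for v in range(kw):
                    acc += image[i * stride + u * dilation][j * stride + v * dilation] * kernel[u][v]
            row.append(acc)
        out.append(row)
    return out


def conv2d_params(k, c_in, c_out):
    """Weights in a standard k x k convolution (bias ignored)."""
    return k * k * c_in * c_out


def depthwise_separable_params(k, c_in, c_out):
    """Depthwise k x k (one kernel per input channel) plus pointwise 1 x 1."""
    return k * k * c_in + c_in * c_out


image = [[r * 4 + c for c in range(4)] for r in range(4)]  # 4x4 test pattern
diag = [[1, 0], [0, -1]]                                    # diagonal difference kernel
print(conv2d_single(image, diag))              # 3x3 output
print(conv2d_single(image, diag, dilation=2))  # 2x2 output, wider receptive field
print(conv2d_params(3, 32, 64), depthwise_separable_params(3, 32, 64))
```

Take 3x3 kernels mapping 32 input channels to 64 output channels. A standard convolution needs 18,432 weights, while the depthwise separable version needs 2,336, roughly 8x fewer.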

② Pooling and Normalization

Pooling operators reduce the spatial size of feature maps and add some translation invariance, which helps in tasks such as image classification. Normalization operators stabilize the training process.

- MaxPool2D / AvgPool2D (Max Pooling / Average Pooling)

- GlobalMaxPool / GlobalAvgPool (Global Max Pooling / Global Average Pooling)

- BatchNormalization (Batch Normalization)

- LayerNormalization (Layer Normalization)

- MaxPool2D / AvgPool2D: Take the maximum value (MaxPool) or average value (AvgPool) within a local window, such as 2x2. They mainly shrink the spatial size (width and height) of the feature map, reduce computation, and make features more robust to small shifts in position.

- GlobalMaxPool / GlobalAvgPool: Take the maximum or average value of each channel over the entire feature map. This converts an HxWxC feature map directly into a 1x1xC vector of global features.

- BatchNormalization: For each channel, normalize the data across the batch (subtract the mean, divide by the standard deviation) to zero mean and unit variance, then scale and shift with learnable parameters. This speeds up training convergence, mitigates vanishing/exploding gradients, and has a mild regularization effect.

- LayerNormalization: Similar to batch normalization, but it normalizes over the features (channels and, where applicable, spatial positions) of each individual sample instead of across the batch. It works better in sequence models such as Transformers and with small batch sizes.
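The difference between these operators is easiest to see on small lists. The sketch below is illustrative, with hypothetical helper names and these simplifications:

- Pooling works on one H x W feature map.
- `gamma`/`beta` are scalars, whereas real layers use per-channel vectors.
- `batch_norm` uses the current batch's statistics, as in training.

At inference time, which is the NPU's job, BatchNormalization uses statistics stored during training instead. That is why it is commonly folded into the preceding convolution during model conversion.

```python
import math


def pool2d(x, k=2, stride=2, mode="max"):
    """Max or average pooling over k x k windows of a single H x W feature map."""
    out = []
    for i in range(0, len(x) - k + 1, stride):
        row = []
        for j in range(0, len(x[0]) - k + 1, stride):
            window = [x[i + u][j + v] for u in range(k) for v in range(k)]
            row.append(max(window) if mode == "max" else sum(window) / len(window))
        out.append(row)
    return out


def global_pool(channels, mode="avg"):
    """Reduce each H x W channel to one value: H x W x C -> C values (the 1x1xC vector)."""
    result = []
    for fmap in channels:
        flat = [v for row in fmap for v in row]
        result.append(max(flat) if mode == "max" else sum(flat) / len(flat))
    return result


def _standardize(values, eps):
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    return [(v - mean) / math.sqrt(var + eps) for v in values]


def batch_norm(batch, gamma=1.0, beta=0.0, eps=1e-5):
    """Normalize each feature across the batch (training-mode statistics), then scale and shift."""
    columns = [_standardize(list(col), eps) for col in zip(*batch)]
    return [[gamma * v + beta for v in row] for row in zip(*columns)]


def layer_norm(batch, gamma=1.0, beta=0.0, eps=1e-5):
    """Normalize the features of each sample independently, then scale and shift."""
    return [[gamma * v + beta for v in _standardize(sample, eps)] for sample in batch]


fmap = [[1, 2, 3, 4], [5, 6, 7, 8], [9, 10, 11, 12], [13, 14, 15, 16]]
print(pool2d(fmap), pool2d(fmap, mode="avg"), global_pool([fmap]))
batch = [[1.0, 2.0, 3.0], [3.0, 6.0, 9.0]]  # 2 samples x 3 features
print(batch_norm(batch))  # each feature column now has zero mean
print(layer_norm(batch))  # each sample row now has zero mean
```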

③ Activation Functions

Activation functions introduce non-linearity to neural networks, helping models learn complex feature representations.

- ReLU / ReLU6 / LeakyReLU (Rectified Linear Unit and Variants)

- Sigmoid / Tanh (S-shaped Function / Hyperbolic Tangent Function)

- Swish / Mish (Smooth, Non-monotonic ReLU Alternatives)

- Softmax (Softmax Function)

- ReLU: Rectified Linear Unit. Sets all negative values to 0 and keeps positive values unchanged. Commonly used in hidden layers; its constant gradient for positive inputs mitigates the vanishing gradient problem.

- ReLU6: Like ReLU, but clips the output to the range [0, 6]. The bounded range suits low-precision (quantized) inference, and it is common in mobile networks such as MobileNet.

- LeakyReLU: A ReLU variant that addresses the "Dead ReLU" problem. For negative inputs it outputs a small non-zero value (a small slope times the input), so neurons do not "die".

- Sigmoid: Maps input to the range (0, 1), commonly used in the output layer of binary classification problems.

- Tanh: Maps input to the range (-1, 1). It is similar in shape to Sigmoid, but its output range is wider and zero-centered.

- Swish: A smooth activation function, f(x) = x * sigmoid(x), that performs well in some models.

- Mish: Another smooth activation function, f(x) = x * tanh(softplus(x)), also effective in some models.

- Softmax: Maps the input vector to a probability distribution, commonly used in the output layer of multi-class classification problems. Each element becomes a probability between 0 and 1, and all elements sum to 1.
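The activation formulas above translate directly into code. The scalar Python sketch below is for illustration only, and the function names are hypothetical. The LeakyReLU slope of 0.01 is an assumed default, since the slope is a hyperparameter. Sigmoid, softplus, and softmax use standard rearrangements that avoid floating-point overflow for large inputs.

```python
import math


def relu(x):
    return max(0.0, x)


def relu6(x):
    return min(max(0.0, x), 6.0)


def leaky_relu(x, alpha=0.01):
    # alpha is the negative slope (a hyperparameter; 0.01 is an assumed default)
    return x if x >= 0 else alpha * x


def sigmoid(x):
    # Branch on the sign so math.exp never receives a large positive argument
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def softplus(x):
    # Numerically stable log(1 + e^x)
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def swish(x):
    return x * sigmoid(x)


def mish(x):
    return x * math.tanh(softplus(x))


def softmax(xs):
    # Subtracting the max leaves the result unchanged but prevents overflow
    m = max(xs)
    exps = [math.exp(v - m) for v in xs]
    total = sum(exps)
    return [e / total for e in exps]


for f in (relu, relu6, leaky_relu, sigmoid, math.tanh, swish, mish):
    print(f.__name__, [round(f(x), 3) for x in (-2.0, 0.0, 7.0)])
print(softmax([1.0, 2.0, 3.0]))
```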

④ Other Operators

The following basic arithmetic, tensor-manipulation, and connecting operators are needed to build complex network structures.

- Add / Sub / Mul / Div (Basic Arithmetic Operations)

- Concat / Split (Concatenation and Splitting)

- Reshape / Transpose (Shape Transformation)

- MatMul / FullyConnected (Matrix Multiplication / Fully Connected Layer)

- Add / Sub / Mul / Div: Element-wise addition, subtraction, multiplication, and division.

- Concat: Used to merge multiple tensors, concatenating on the specified dimension.

- Split: Used to split a tensor into multiple sub-tensors, splitting on the specified dimension.

- Reshape: Used to change the shape of the tensor without changing the number of elements.

- Transpose: Used to swap the dimension order of the tensor.

- MatMul: Used for matrix multiplication, mainly used to build linear transformation layers in neural networks.

- FullyConnected: Used for fully connected layers, multiplying the input tensor with the weight matrix, then adding the bias term.
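Most of these operators rearrange data or do simple arithmetic. The following list-based sketch illustrates their semantics for 2-D tensors only, using hypothetical helper names. It also shows that FullyConnected is a matrix-vector product plus a bias.

```python
def fully_connected(x, weights, bias):
    """y = W x + b, where weights has one row per output neuron."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def matmul(a, b):
    """Matrix product of an (m x k) and a (k x n) list-of-lists."""
    return [[sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def transpose(m):
    """Swap rows and columns."""
    return [list(col) for col in zip(*m)]


def reshape(flat, rows, cols):
    """Reshape a flat list; the element count must not change."""
    if rows * cols != len(flat):
        raise ValueError("reshape must preserve the number of elements")
    return [flat[r * cols:(r + 1) * cols] for r in range(rows)]


def concat(a, b, axis=0):
    """Join two 2-D tensors along rows (axis=0) or columns (axis=1)."""
    return a + b if axis == 0 else [ra + rb for ra, rb in zip(a, b)]


def split(m, index, axis=0):
    """Split a 2-D tensor into two parts at `index` along the given axis."""
    if axis == 0:
        return m[:index], m[index:]
    return [r[:index] for r in m], [r[index:] for r in m]


W = [[1, 0], [0, 1], [1, 1]]  # 3 outputs, 2 inputs
print(fully_connected([1, 2], W, [0, 0, 1]))
print(reshape([1, 2, 3, 4, 5, 6], 2, 3), transpose([[1, 2, 3], [4, 5, 6]]))
```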

3 RKNN Software Stack Ecosystem Introduction

3.1 RKNN Software Stack Architecture

```text
Application Layer
├── Python Application (rknn-toolkit2)
├── C/C++ Application (rknnrt)
└── Android Application (RKNN API)
        │
Framework Layer
├── RKNN-Toolkit2 (Model Conversion)
├── RKNN Runtime (Inference Engine)
└── RKNN API (Programming Interface)
        │
Driver Layer
├── NPU Driver (Kernel Driver)
├── Memory Management (Memory Manager)
└── Power Management (Power Manager)
        │
Hardware Layer
└── RK3568 NPU Hardware
```

3.2 Core Component Introduction

1) RKNN-Toolkit2

RKNN-Toolkit2 serves as the bridge between model training and deployment. It converts models from mainstream frameworks and formats (such as TensorFlow, PyTorch, and ONNX) into the dedicated RKNN format. During conversion it applies graph optimizations and, when enabled, quantization (for example to INT8), which substantially improves inference efficiency on the NPU. It also provides model simulation and performance analysis, so developers can verify a model's accuracy and speed before deployment. The tool supports running on Windows, Linux, and macOS systems, with good cross-platform compatibility.
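Quantization needs a small calibration set of representative inputs. In RKNN-Toolkit2 this set is passed to the build step (`build(do_quantization=True, dataset=...)`) as a text file listing one sample path per line. The helper below generates such a file from a folder of images. Its function names and the accepted image extensions are assumptions. It writes absolute paths so the list does not depend on the working directory.

```python
from pathlib import Path

# Extensions treated as calibration images (an assumption; adjust to your data)
IMAGE_EXTS = {".jpg", ".jpeg", ".png", ".bmp"}


def calibration_list(paths, limit=None):
    """Return dataset.txt content: sorted image paths, one per line."""
    images = sorted(str(p) for p in paths if Path(p).suffix.lower() in IMAGE_EXTS)
    if limit is not None:
        images = images[:limit]
    return "".join(f"{line}\n" for line in images)


def write_calibration_list(image_dir, out_txt="dataset.txt", limit=None):
    """Write the list for every image in image_dir; return the number of entries."""
    content = calibration_list(Path(image_dir).resolve().iterdir(), limit)
    Path(out_txt).write_text(content)
    return content.count("\n")
```

For example, `write_calibration_list("calib_images", limit=100)` (with a hypothetical `calib_images` folder) writes `dataset.txt` in the current directory.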

Supported input frameworks and formats:

- TensorFlow / TensorFlow Lite

- PyTorch / ONNX

- Caffe / Caffe2

- MXNet

- Darknet

2) RKNN Runtime

Core Functions:

- Efficient model inference engine

- Memory management and optimization

- Multi-threading support

- Hardware resource scheduling

RKNN Runtime offers APIs in several languages to suit different development scenarios. For embedded deployment that demands maximum performance and minimum latency, the C/C++ API is the best choice, giving the most direct, lowest-overhead control. For rapid algorithm prototyping, research, and scripting, the Python API is preferred for its concise syntax and fast iteration. For the Android platform, a Java API lets developers integrate AI features into existing Android applications.
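On the board, the Python route goes through the `rknnlite` bindings listed under the board-side runtime. The minimal sketch below loads a converted model and runs one inference. It assumes the bindings are installed on the board and that a `.rknn` file exists. It also assumes the input is a NumPy array in the layout and dtype the model was converted for, commonly uint8 NHWC for image models. The wrapper function name is hypothetical. The `RKNNLite` methods it calls (`load_rknn`, `init_runtime`, `inference`, `release`) are the bindings' standard calls.

```python
def run_on_board(rknn_path, input_tensor):
    """Load a .rknn model on the RK3568 and run a single inference with RKNNLite.

    Assumes the rknnlite bindings are installed on the board and that
    input_tensor matches the model's expected shape and dtype.
    """
    # Imported inside the function so this module also loads on a PC without the bindings
    from rknnlite.api import RKNNLite

    rknn_lite = RKNNLite()
    try:
        if rknn_lite.load_rknn(rknn_path) != 0:
            raise RuntimeError(f"failed to load {rknn_path}")
        if rknn_lite.init_runtime() != 0:
            raise RuntimeError("failed to initialize the NPU runtime")
        # Returns a list with one output array per model output
        return rknn_lite.inference(inputs=[input_tensor])
    finally:
        # Release NPU and memory resources even if a step fails
        rknn_lite.release()
```

Loading the model and calling `init_runtime` are one-time costs. For a video stream, keep one `RKNNLite` object alive and call `inference` per frame instead of calling this wrapper each time.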

3.3 Development Toolchain

PC Side Tools

```bash
# RKNN-Toolkit2 Installation
pip install rknn-toolkit2

# Model Conversion Tool
rknn-toolkit2-convert

# Performance Analysis Tool
rknn-toolkit2-profiler
```

Board Side Runtime

```text
# RKNN Runtime Library
librknnrt.so

# Python Bindings
rknnlite

# Example Program
rknn_demo
```

3.4 Ecosystem Support

- GitHub Repository: https://github.com/rockchip-linux/rknn-toolkit2

- Development Documentation: Complete API documentation and user guide

- Sample Code: Demos covering various application scenarios

- Model Zoo: Pre-trained models and conversion scripts

The RK3568 NPU has a mature development ecosystem, maintained by Rockchip and supported by an active community. Its core resources are concentrated in the official GitHub repository (rockchip-linux/rknn-toolkit2), which provides a complete software development kit. The kit includes:

- detailed API documentation and user guides
- sample code for image classification, object detection, semantic segmentation, and other applications
- a continually updated model zoo of pre-trained RKNN models and conversion scripts that helps developers get started quickly

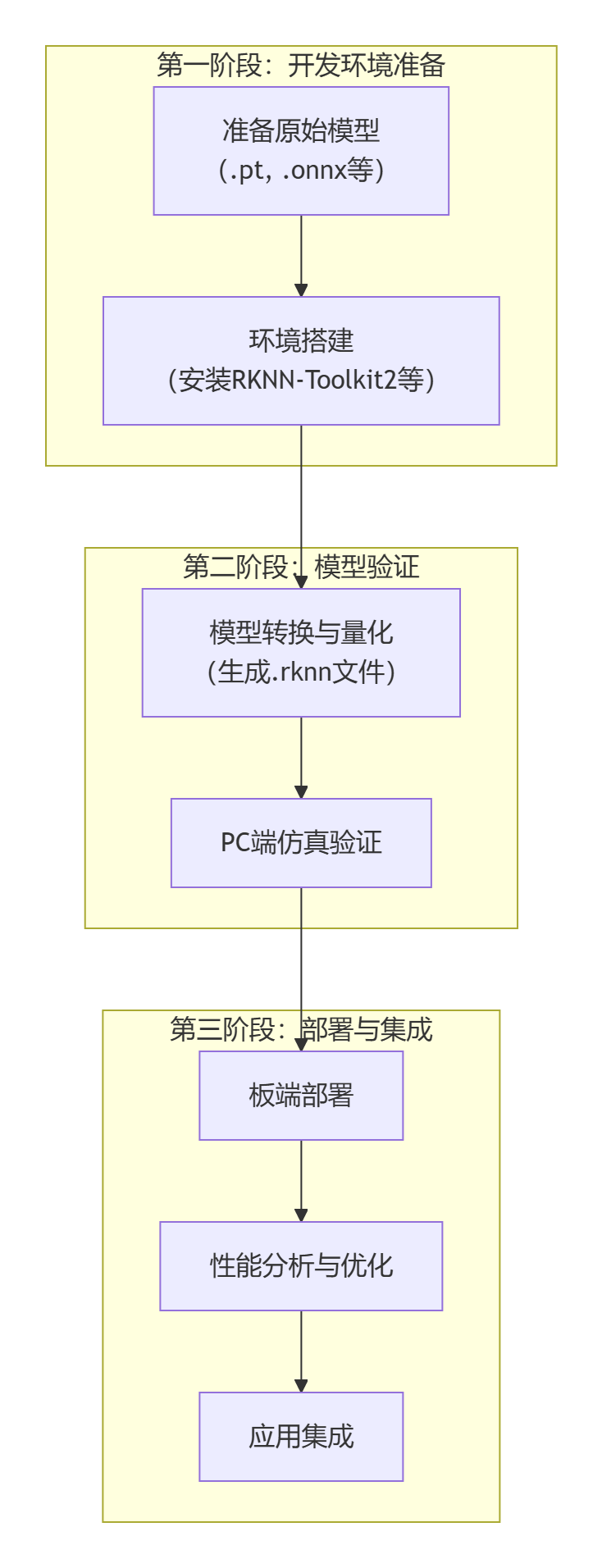

4 Development Process Overview

The first stage is "Development Environment Preparation", which involves two basic tasks. The first is preparing the original model file (such as .pt or .onnx format) trained and exported from a mainstream framework such as PyTorch or TensorFlow. The second is setting up the model conversion toolchain, that is, installing the RKNN-Toolkit2 SDK and its dependencies.

The second stage, "Model Verification", ensures that the model runs correctly and efficiently. First, RKNN-Toolkit2 converts the original model into the NPU-specific RKNN format, usually with a quantization step that shrinks the model and speeds up inference. Next, the converted model runs in simulation on the PC. This quickly checks its functional correctness and basic performance without real hardware, which greatly speeds up development and debugging.
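The conversion and PC-side simulation steps map onto a short RKNN-Toolkit2 script. The sketch below makes these assumptions:

- The toolkit is installed on the PC.
- The ONNX model's input is an RGB image scaled to 0-1, hence mean 0 and std 255.
- A calibration list file exists for INT8 quantization.

The file names and the wrapper function are hypothetical. `RKNN`, `config`, `load_onnx`, `build`, `export_rknn`, `init_runtime`, `inference`, and `release` are RKNN-Toolkit2 API calls. Calling `init_runtime()` without a target selects the PC simulator.

```python
def convert_and_simulate(onnx_path, dataset_txt, rknn_path, sample_input=None):
    """Convert an ONNX model to RKNN for the RK3568, optionally testing it in the simulator.

    Assumes rknn-toolkit2 is installed, the model takes 0-255 RGB input that should
    be scaled to 0-1, and dataset_txt lists calibration samples one per line.
    """
    # Imported inside the function so this module loads without the toolkit
    from rknn.api import RKNN

    rknn = RKNN(verbose=False)
    try:
        # Fold input normalization into the model and select the target chip
        rknn.config(mean_values=[[0, 0, 0]], std_values=[[255, 255, 255]],
                    target_platform="rk3568")
        if rknn.load_onnx(model=onnx_path) != 0:
            raise RuntimeError("load_onnx failed")
        # INT8 quantization calibrated on the samples listed in dataset_txt
        if rknn.build(do_quantization=True, dataset=dataset_txt) != 0:
            raise RuntimeError("build failed")
        if rknn.export_rknn(rknn_path) != 0:
            raise RuntimeError("export_rknn failed")
        outputs = None
        if sample_input is not None:
            # No target argument: run on the PC simulator
            if rknn.init_runtime() != 0:
                raise RuntimeError("init_runtime failed")
            outputs = rknn.inference(inputs=[sample_input])
        return outputs
    finally:
        rknn.release()
```

The simulator run stays in the same session as `build` because RKNN-Toolkit2's simulator is typically used on the freshly built model. A `.rknn` file reloaded later is generally run on a connected board instead.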

The third stage, "Deployment and Integration", moves the verified RKNN model to the target hardware. First, deploy the model on the board and confirm that it loads correctly in the real environment. Next, profile it and tune parameters to make full use of the NPU's computing power. Finally, integrate the optimized model into the end application to complete the AI solution.
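For the performance-analysis step, a simple wall-clock harness is often enough to compare optimizations. The generic sketch below uses only the standard library. It runs a few warm-up calls, then times repeated calls of any inference function, for example a lambda wrapping `RKNNLite.inference` on the board. The function name and default counts are assumptions.

```python
import statistics
import time


def benchmark(infer, warmup=5, runs=50):
    """Time repeated calls of infer(); return mean/median latency (ms) and FPS."""
    for _ in range(warmup):
        # Warm-up calls absorb one-time costs such as lazy allocation
        infer()
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        infer()
        samples.append((time.perf_counter() - start) * 1000.0)
    mean_ms = statistics.mean(samples)
    return {
        "mean_ms": mean_ms,
        "median_ms": statistics.median(samples),
        "fps": 1000.0 / mean_ms,
    }


# Stand-in workload; on the board, pass e.g. lambda: rknn_lite.inference(inputs=[img])
print(benchmark(lambda: sum(range(10_000)), warmup=2, runs=10))
```

The median is reported alongside the mean because occasional scheduling hiccups on a busy board can inflate the mean.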

5 Summary

The RK3568 NPU brings dedicated AI acceleration and complete software ecosystem support to edge AI applications. With the RKNN software stack, developers can deploy a wide range of deep learning models to the GM-3568JHF development board for efficient AI inference.

The following chapters walk through the specific steps to help you get started quickly with RK3568 NPU development: development environment setup, running the official examples, model conversion, and custom model deployment.